ZIP is a great format: it’s extremely broadly deployed, relatively simple, and supports a wide variety of use cases pretty well. ZIP is the underlying format beneath Java Archives (.jar), Office files (.docx/.xlsx/.pptx), Fiddler Session Archive ZIP files (.saz), and many more.

Even though some features (Unicode filenames, AES encryption, advanced compression engines) aren’t supported by all clients (particularly Windows Explorer), basic support for ZIP is omnipresent. There are even solid implementations in JavaScript (optionally utilizing asm.js), and discussion of adding related primitives directly to the browser. 2024 Update: I made a simple web app for zipping files.

I learned a fair amount about the ZIP format when building ZIP repair features in Fiddler’s SAZ file loader. Perhaps the most interesting finding is that each individual file within a ZIP is compressed on its own, without any context from files already added to the ZIP. This means, for instance, that you can easily remove files from within a ZIP file without recompressing anything: you need only delete the removed entries and recompute the index. However, this design is also a limitation: if the files you’re compressing contain a lot of interfile redundancy (data duplicated across multiple files), the compression ratio does not improve the way it would with intrafile redundancy (data duplicated within a single file).
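
To make the "compressed on its own" point concrete, here is a minimal sketch using Python's standard library. The helper names (`build_archive`, `entry_sizes`, `inflate_entry_alone`) are made up for illustration, and the sketch assumes a plain Deflate-compressed, unencrypted archive. It locates one entry's bytes via its local file header and inflates them with no reference to any other entry.

```python
import io
import struct
import zipfile
import zlib

# Fixed 30-byte ZIP local file header: signature, version, flags, method,
# time, date, CRC-32, compressed size, uncompressed size, name length,
# extra-field length.
LOCAL_HEADER = struct.Struct("<4s5H3I2H")


def build_archive(files):
    """Build an in-memory ZIP (Deflate) from a {name: bytes} mapping."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        for name, data in files.items():
            zf.writestr(name, data)
    return buf.getvalue()


def entry_sizes(archive):
    """Return {name: compressed size} as recorded in the archive's index."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zf:
        return {info.filename: info.compress_size for info in zf.infolist()}


def inflate_entry_alone(archive, name):
    """Decompress a single entry using only that entry's own bytes."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zf:
        info = zf.getinfo(name)
    fields = LOCAL_HEADER.unpack_from(archive, info.header_offset)
    signature, name_len, extra_len = fields[0], fields[9], fields[10]
    if signature != b"PK\x03\x04":
        raise ValueError("not a local file header")
    start = info.header_offset + LOCAL_HEADER.size + name_len + extra_len
    raw = archive[start:start + info.compress_size]
    # wbits=-15 selects a raw Deflate stream (no zlib or gzip wrapper).
    return zlib.decompress(raw, wbits=-15)


if __name__ == "__main__":
    files = {"a.txt": b"hello " * 500, "b.txt": b"hello " * 500}
    archive = build_archive(files)
    print(entry_sizes(archive))
    print(inflate_entry_alone(archive, "b.txt") == files["b.txt"])
```

Because the second file gets no help from the first, two identical files end up with the same compressed size; the second copy is not stored as a cheap back-reference to the first.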

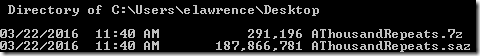

This limitation can be striking in cases like Fiddler, where there may be a lot of repeated data across multiple Sessions. In the extreme case, consider a SAZ file with 1000 near-identical Sessions. When that data is compressed to a SAZ, it is 187 megabytes. If the data were instead compressed with 7-Zip, which shares a compression context across embedded files, the output is 99.85% smaller!
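
The effect is easy to reproduce at a small scale. The sketch below is not the SAZ experiment described above. It fabricates near-identical "sessions" (a unique ID line plus a shared body of random bytes, chosen because random data does not compress within a single file) and compares a ZIP against a tar stream compressed with LZMA. The tar+LZMA combination is my stand-in for 7-Zip's shared-context mode, since the Python standard library cannot write .7z files; the function names and entry names are hypothetical.

```python
import io
import lzma
import random
import tarfile
import zipfile


def make_sessions(count=100, body_size=20_000, seed=1):
    """Fabricate near-identical payloads: unique ID line + shared body."""
    rng = random.Random(seed)
    shared_body = bytes(rng.getrandbits(8) for _ in range(body_size))
    return {
        f"raw/{i:04d}_s.txt": b"Session-Id: %d\r\n" % i + shared_body
        for i in range(count)
    }


def zip_size(files):
    """Size of a ZIP where every entry is Deflate-compressed on its own."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        for name, data in files.items():
            zf.writestr(name, data)
    return len(buf.getvalue())


def solid_size(files):
    """Size when all files share one context (tar, then LZMA)."""
    tar_buf = io.BytesIO()
    with tarfile.open(fileobj=tar_buf, mode="w") as tf:
        for name, data in files.items():
            info = tarfile.TarInfo(name)
            info.size = len(data)
            tf.addfile(info, io.BytesIO(data))
    return len(lzma.compress(tar_buf.getvalue()))


if __name__ == "__main__":
    sessions = make_sessions()
    z, s = zip_size(sessions), solid_size(sessions)
    print(f"zip: {z} bytes, solid: {s} bytes, saved: {100 * (1 - s / z):.2f}%")
```

The exact savings depend entirely on the made-up data, so treat the printed percentage as illustrative rather than as a reproduction of the 187 megabyte example.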

In most cases, of course, the Session data is not identical, but web traffic sessions on the whole tend to contain a lot of redundancy, particularly when you consider HTTP headers and the Session metadata XML files.

The takeaway here is that when you look at compression, the compression context is very important to the resulting compression ratio. This fact rears its head in a number of other interesting places:

- brotli compression achieves high compression ratios in part by using a sliding window of up to 16 megabytes, as compared to the 32 KB window used by nearly all DEFLATE implementations. This means that brotli content can “point back” much further into the already-processed data stream when repeated data is encountered.

- brotli also benefits by pre-seeding the compression context with a 122 KB static dictionary of common web content; this means that even the first bytes of a brotli-compressed response can benefit from existing context.

- SDCH compression achieves high compression ratios by supplying a carefully crafted dictionary of strings that results in an “ideal” compression context, so that later content can simply refer to the previously calculated dictionary entries.

- Adding context introduces a key tradeoff, however, as the larger a compression context grows, the more memory a compressor and decompressor tend to require.

- While HTTP/2 reduces the need to concatenate CSS and JS files by reducing the performance cost of individual web requests, HTTP response body compression contexts are still per-resource. That means that larger files tend to yield higher compression ratios. See “Bundling Improves Compression.”

- Compression contexts can introduce information disclosure security vulnerabilities if a context is shared between “secret” and “untrusted” content. See the CRIME and BREACH attacks, and HPACK, the HTTP/2 header compression scheme designed with such attacks in mind. In these attacks, the bad guy takes advantage of the fact that if his “guess” matches some secret string earlier in the compression context, the result will compress better (smaller) than if his guess is wrong. This attack can be mitigated by isolating compression contexts by trust level, or by introducing random padding to frustrate size analysis.
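
Two of the points above, pre-seeded dictionaries and the size side channel, can be sketched with Python's `zlib`. This is a toy under stated assumptions: zlib's preset-dictionary feature (`zdict`) stands in for brotli's static dictionary and SDCH, which work differently in detail, and the headers, token value, and helper names are invented for illustration. It is not a working CRIME or BREACH exploit; real attacks typically recover a secret one character at a time, where the size difference can be much subtler.

```python
import zlib


def deflate_size(data, zdict=None):
    """Compressed size of data, optionally with a preset dictionary."""
    if zdict is None:
        comp = zlib.compressobj(level=9)
    else:
        # The decompressor must be given the same dictionary to decode this.
        comp = zlib.compressobj(level=9, zdict=zdict)
    return len(comp.compress(data) + comp.flush())


# Hypothetical dictionary of strings we expect to see in responses.
HEADER_DICT = (
    b"HTTP/1.1 200 OK\r\n"
    b"Content-Type: text/html; charset=utf-8\r\n"
    b"Cache-Control: no-cache\r\n"
    b"Content-Encoding: gzip\r\n"
)

RESPONSE = (
    b"HTTP/1.1 200 OK\r\n"
    b"Content-Type: text/html; charset=utf-8\r\n"
    b"Cache-Control: no-cache\r\n\r\n<p>hi</p>"
)

# Hypothetical request containing a secret the attacker cannot read.
PAGE = b"GET / HTTP/1.1\r\nCookie: token=9f8e7d6c5b4a\r\n\r\n"


def guess_size(secret_page, guess):
    """Size observed when attacker-chosen text shares the secret's context."""
    return deflate_size(secret_page + b"token=" + guess)


if __name__ == "__main__":
    print("no dictionary:  ", deflate_size(RESPONSE))
    print("with dictionary:", deflate_size(RESPONSE, zdict=HEADER_DICT))
    print("wrong guess:    ", guess_size(PAGE, b"0a1b2c3d4e5f"))
    print("right guess:    ", guess_size(PAGE, b"9f8e7d6c5b4a"))
```

The dictionary helps even though the response is short, because its first bytes can already refer back to existing context. The right guess comes out smaller than the wrong one for the same reason: the whole guess collapses into a back-reference to the secret, and the attacker only needs to observe the size.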

Want to learn much more about ZIP files? Check out these two great posts; or you can learn more about the author of PKZIP in this great (sad) video.

Ready to learn a lot more about compression on the web? Check out my earlier Compressing the Web blog, my Brotli blog, and the excellent Google Developers CompressorHead video series.

-Eric