As we’ve been working to replatform the new Microsoft Edge browser atop Chromium, one interesting outcome has been early exposure to a lot more bugs in Chromium. Rapidly root-causing these regressions (bugs in scenarios that used to work correctly) has been a high-priority activity to help ensure Edge users have a good experience with our browser.

Stabilization via Channels

Edge’s code stabilizes as it flows through release channels, from the developer-only ToT/HEAD (the main branch’s tip-of-tree, the latest commit in the source repository) to the Canary Channel (updated daily), to the Dev Channel (updated weekly), to the Beta Channel (updated a few times over its six-week lifetime), and finally to our Stable Channel.

Until recently, Microsoft Edge was only available in Canary and Dev channels, which meant that any bugs landed in Chromium would quickly impact nearly all users of Edge. Even as we added a Beta channel, we still found users reporting issues that “reproduce in all Edge builds, but not in Chrome.”

As it turns out, most of the “not in Chrome” comparisons really mean “not repro in Chrome Stable.” That’s because either the regressions simply haven’t made it to Stable yet, or the regressions are hidden behind Feature Flags that are not enabled for Chrome’s stable channel.

A common example of this is LayoutNG, a major update to the layout engine used in Chromium. This redesigned engine is more flexible than its predecessor and allows the layout engineers to more easily add the latest layout features to the browser as new standards are finalized. Unfortunately, changing any major component of the browser is almost certain to lead to regressions, especially in corner cases. Google had enabled LayoutNG by default in the code for Chrome 76 (and Edge picked up the change), but then subsequently Google used the Feature Flag to disable LayoutNG for the stable channel three days before Chrome 76 shipped. As a result, the new LayoutNG engine is on-by-default for Chrome Beta, Dev, and Canary and Edge Beta, Dev, and Canary.

The key difference was that until January 2020, Edge didn’t yet have a public stable channel to which bug-impacted users could retreat. Therefore, reproducing, isolating, and fixing regressions as quickly as possible has been important for Edge engineers.

Isolating Regressions

When we receive a report of a bug that reproduces in Microsoft Edge, we follow a set of steps for figuring out what’s going on.

Check Latest Canary

The first step is checking whether it reproduces in our Edge Canary builds.

If not, then it’s likely the bug was already found and fixed. We can either tell our users to sit tight and wait for the next update, or we can search on CrBug.com to figure out exactly when the fix went in and tell the user specifically when a fix will reach them.

Check Upstream

If the problem still reproduces in the latest Edge Canary build, we next try to reproduce the problem in the equivalent build of Chrome or plain-vanilla Chromium.

If Not Repro in Chromium?

If the problem does not reproduce in Chromium, this implies that the problem was likely caused by Microsoft’s modifications to the code in our internal branches. However, it might also be the case that the problem is hidden in upstream Chrome behind an experimental flag, so sometimes we must go spelunking into the browser’s Feature Flag configuration by visiting:

chrome://version/?show-variations-cmd

The Command-Line Variations section of that page reveals the names of the experiments that are enabled/disabled. Launching the browser with a modified version of that command line lets you run Chrome in a different configuration [2].
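As a rough illustration of reusing that command line, the sketch below pulls out the switches from `--force-fieldtrials=` onward so they can be appended to another launch. The helper name and the shortened sample string are hypothetical; a real variations command line is much longer, and on Windows you may need different quoting rules than `shlex` applies.

```python
import shlex


def variation_args(variations_cmd: str) -> list[str]:
    """Return the switches from --force-fieldtrials= onward, or [] if absent."""
    args = shlex.split(variations_cmd)
    for index, arg in enumerate(args):
        if arg.startswith("--force-fieldtrials="):
            return args[index:]
    return []


# Hypothetical, heavily shortened sample of what the page might show.
sample = (
    'chrome.exe --force-fieldtrials="TrialA/Enabled/TrialB/Control" '
    '--enable-features="FeatureX<TrialA"'
)
print(variation_args(sample))
```

The returned list can then be edited (for example, by removing one trial at a time) before relaunching the browser with it.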

If the issue really is unique to Edge, we can use git blame and similar techniques on our code to see where we might have caused the problem.

If Repro in Chromium?

If the problem does reproduce in Chrome or Chromium, that’s strong evidence that we’ve inherited the problem from upstream.

Sanity Check: Does it Repro in Firefox?

If the problem isn’t a crashing bug or some other obviously incorrect behavior, I will first check the site’s behavior in the latest Firefox Nightly build, on the off chance that the browsers are behaving correctly and the site’s markup or JavaScript is actually incorrect.

Try tweaking common Flags

Depending on the area where the problem occurs, a quick next step is to try toggling Feature Flags that seem relevant on the chrome://flags page. For instance, if the problem is in layout, try setting chrome://flags/#enable-layout-ng to Disabled. If the problem seems to be related to the network, try toggling chrome://flags/#network-service-in-process, and so on.

Understanding whether the problem can be impacted by flags enables us to more quickly find its root cause, and provides us an option to quickly mitigate the problem for our users (by adjusting the flag remotely from our experimental configuration server).

Bisecting Regressions

The gold standard for root-causing regressions is finding the specific commit (aka “change list” aka “pull request” aka “patch”) that introduced the problem. When we determine which commit caused a problem, we not only know exactly which builds the problem affects, we also know which engineer committed the breaking change, and we know exactly what code was changed; often we can quickly spot the bug within the changed files.

Fortunately, Chromium engineers trying to find regressing commits have a superpower, known as bisect-builds.py. Using this script is simple: you specify the known-bad Chromium version and a guesstimate of the last known-good version. You specify each version using either a milestone (if it has reached stable), e.g. M80, a full version string (1.2.3.4), or the commit position (a six-digit integer you can find using OmahaProxy or on the chrome://version page in Chromium builds):

python tools/bisect-builds.py -a win -g M80 -b 92.0.4505.0 --verify-range --use-local-cache -- --no-first-run https://example.com

The script then automates the binary search of the builds between the two positions, requiring the user to grade each trial as “Good” or “Bad”, until the introduction of the regression is isolated.
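The core loop is an ordinary binary search. The following is a minimal sketch of that idea, not the script’s actual implementation: the `is_bad` callback stands in for the human grading each trial, and `FIRST_BAD` is a made-up culprit revision used only for illustration.

```python
from typing import Callable


def bisect_builds(good: int, bad: int, is_bad: Callable[[int], bool]) -> tuple[int, int, int]:
    """Binary-search between a known-good and a known-bad revision.

    Returns (last_good, first_bad, trials).
    """
    trials = 0
    while bad - good > 1:
        mid = (good + bad) // 2
        trials += 1
        if is_bad(mid):
            bad = mid   # regression is at or before mid
        else:
            good = mid  # regression is after mid
    return good, bad, trials


FIRST_BAD = 690000  # hypothetical culprit revision
result = bisect_builds(681094, 690908, lambda rev: rev >= FIRST_BAD)
print(result)
```

Each trial halves the remaining range, which is why even a range of several thousand commits needs only a little over a dozen grades.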

The simplicity of this tool belies its magic, or at least the power of the log2 math that underlies it. OmahaProxy informs me that the current Chrome tree contains 692,278 commits. log2(692278) is 19.4, which means that we should be able to isolate any regressing change in history in just 20 trials, taking a few minutes at most [1]. And it’s rare that you’d want to even try to bisect all of history; between stable and ToT we see ~27250 commits, so we should be able to find any regression within such a range in just 15 trials.
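The arithmetic is easy to check. This small sketch rounds log2 of the commit count up to the next whole trial; the function name is just for illustration.

```python
import math


def max_trials(commit_count: int) -> int:
    """Worst-case number of good/bad grades needed to bisect a range."""
    return math.ceil(math.log2(commit_count))


print(max_trials(692_278))  # 20
print(max_trials(27_250))   # 15
```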

On CrBug.com, regressions awaiting a bisect are tagged with the label Needs-Bisect, and a small set of engineers try to burn down the backlog every day. But running bisects is so easy that it’s usually best to just do it yourself, and Edge engineers are doing so constantly.

One advantage available to Googlers but not the broader Chromium community is the ability to do what’s called a “Per-Revision Bisect.” Inside Google, Chrome builds a new build for every single change (so they can unambiguously flag any change that impacts performance), but not all of these builds are public. Publicly, Google provides the Chromium Build Archive [4], an archive of builds that are built approximately every one to fifteen commits. That means that when we non-Googlers perform bisects, we often don’t get back a single commit, but instead a regression range. We then must look at all of the commits within that range to figure out which was the culprit. In my experience, this is rarely a problem: most commits in the range obviously have nothing to do with the problem (“Change the icon for some far off feature”), so there are rarely more than one or two obvious suspects.
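To see why a public bisect ends in a range rather than a single commit, here is a small sketch that bisects over only the revisions that have archived builds. The every-12-commits spacing and the `CULPRIT` revision are assumptions chosen for illustration; the real archive’s spacing varies.

```python
from typing import Callable, Sequence


def bisect_snapshots(snapshots: Sequence[int], is_bad: Callable[[int], bool]) -> tuple[int, int]:
    """Bisect over archived builds only.

    `snapshots` is sorted ascending; the first is known good, the last known bad.
    Returns (last_good_snapshot, first_bad_snapshot).
    """
    lo, hi = 0, len(snapshots) - 1
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if is_bad(snapshots[mid]):
            hi = mid
        else:
            lo = mid
    return snapshots[lo], snapshots[hi]


# Hypothetical archive with one build every 12 commits.
snapshots = list(range(681094, 690908, 12))
CULPRIT = 690000  # hypothetical regressing commit

good, bad = bisect_snapshots(snapshots, lambda rev: rev >= CULPRIT)
suspects = list(range(good + 1, bad + 1))  # commits that must be reviewed by hand
print(good, bad, len(suspects))
```

The search cannot get any narrower than the gap between two adjacent archived builds, so the remaining commits have to be read and judged manually.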

The Edge team does not currently expose our Edge build archives for bisects, but fortunately almost all of our web platform work is contributed upstream, so bisecting against Chromium is almost always effective.

A Recent Bisect Case Study

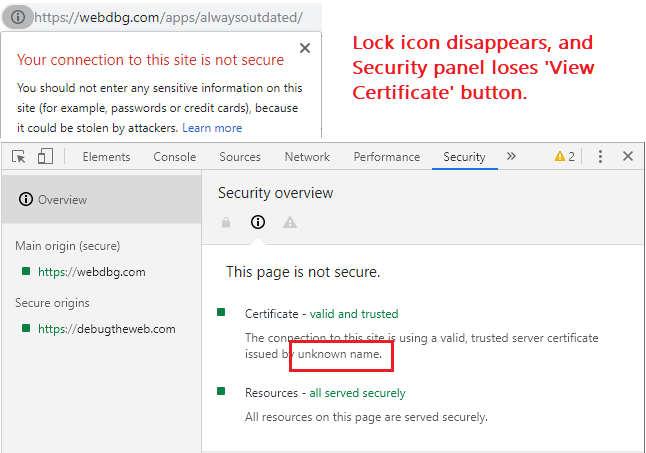

Last Thursday, we started to get reports of a “missing certificate” problem in Microsoft Edge, whereby the browser wasn’t showing the expected Lock icon for HTTPS pages that didn’t contain any mixed content:

While the lock was missing for some users, it was present for others. After also reproducing the issue in Chrome itself, I filed a bug upstream and began investigating.

Back when I worked on the Chrome Security team, we saw a bunch of bugs that manifested like this one that were caused by refactoring in Chrome’s navigation code. Users could hit these bugs in cases where they navigated back/forward rapidly or performed an undo tab close operation, or sites used the HTML5 History API. In all of these cases, the high-level issue is that the page’s certificate is missing in the security_state: either the ssl_status on the NavigationHandle is missing or it contains the wrong information.

This issue, however, didn’t seem to involve navigations, but instead was hit as the site was loaded and thus it called to mind a more recent regression from back in March, where sites that used AppCache were missing their lock icon. That issue involved a major refactoring to use the new Network Service.

One fact that immediately jumped out at me about the sites first reported to hit this new problem is that they all use ServiceWorker (e.g. Hotmail, Gmail, MS Teams, Twitter). Like AppCache, ServiceWorker allows the browser to avoid hitting the network in response to a fetch. As with AppCache, that characteristic means that the browser must somehow have the “original certificate” for that response from the network so it can set that certificate in the security_state when it’s needed. In the case of navigations handled by ServiceWorkers, the navigated page inherits the certificate of the controlling ServiceWorker’s script.

But where does that script’s certificate get stored?

Chromium stores the certificate for a given HTTPS response after the end of the cache entry, so it should be available whenever the cached resource is used [3]. A quick disk search revealed that Edge’s ServiceWorker code stores its scripts inside the folder:

%localappdata%\Microsoft\Edge SxS\User Data\Default\Service Worker\ScriptCache

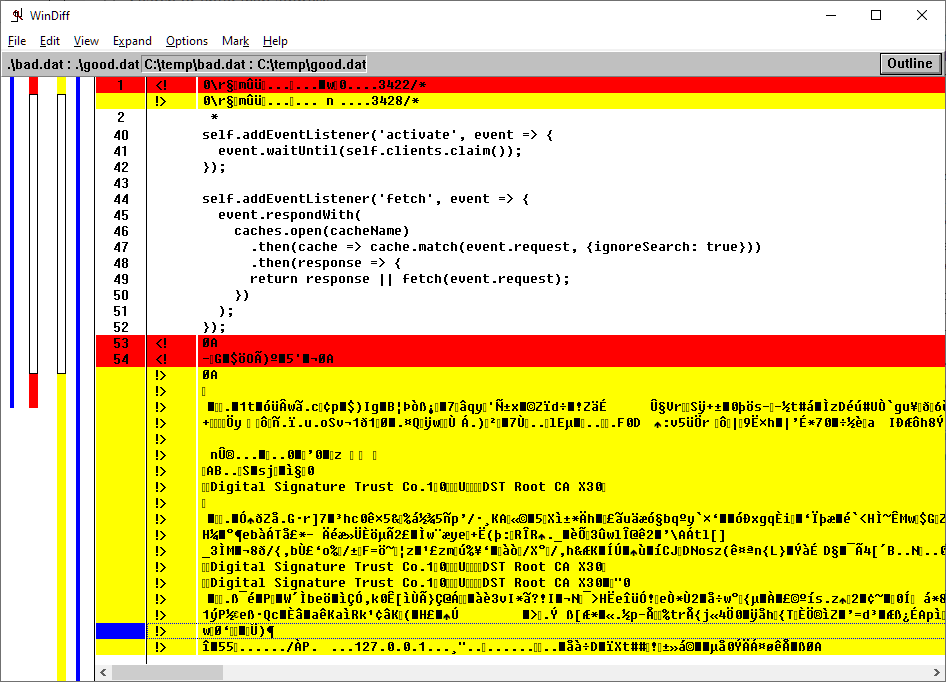

Comparing the contents of the cache file on a “good” and a “bad” PC, we see that the certificate information is missing in the cache file for a machine that reproduces the problem:

So, why is that certificate missing? I didn’t know.

I performed a bisect three times, and each time I ended up with the same range of a dozen commits, only one of which had anything to do with caching, and that commit was for AppCache, not ServiceWorker.

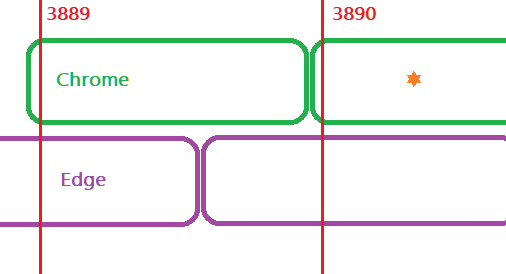

More damning for my bisect suspect was the fact that this suspect commit landed (in 78.0.3890) the day after the build (3889) upon which the reproducing Edge build was based. I spent a bunch of time figuring out whether this could be the off-by-one issue in Edge build numbering before convincing myself that no, it couldn’t be: build number misalignment just means that Edge 3889 might not contain everything that’s in Chrome 3889.

Unless an Edge Engineer had cherry-picked the regressing commit into our 3889 (unlikely), the suspect couldn’t be the culprit.

I posted my research into the bug at 10:39 PM on Friday and forty minutes later a Chrome engineer casually revealed something I didn’t realize: Chrome uses two different codepaths for fetching a ServiceWorker script– a new_script_loader and an updated_script_loader.

And instantly, everything fell into place. The reason the repro wasn’t perfectly reliable (for both users and my bisect attempts) was that it only happened after a ServiceWorker script updates.

- If the ServiceWorker in the user’s cache is missing, it is downloaded by the new_script_loader and the certificate is stored.

- If the ServiceWorker script is present and is unchanged on the server, the lock shows just fine.

- But if the ServiceWorker in the user’s cache is present but outdated, the updated_script_loader downloads the new script… and omits the certificate chain. The lock icon disappears until the user clears their cache or performs a hard (CTRL+F5) refresh, at which point the lock remains until the next script update.
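The three cases above can be sketched as a toy model. This is not Chromium code: the `CachedScript` class and the `load_script` and `lock_shown` helpers are hypothetical, and only the two loader names come from the bug discussion.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CachedScript:
    version: str           # which script version is in the cache
    has_certificate: bool  # whether the certificate was stored alongside it


def load_script(cache: Optional[CachedScript], server_version: str) -> tuple[CachedScript, str]:
    """Toy model of the buggy behavior; returns (cache entry, code path taken)."""
    if cache is None:
        # Nothing cached: a fresh download stores the certificate.
        return CachedScript(server_version, True), "new_script_loader"
    if cache.version == server_version:
        # Cached copy is current: it is reused as-is.
        return cache, "unchanged"
    # Cached copy is outdated: the buggy path drops the certificate.
    return CachedScript(server_version, False), "updated_script_loader"


def lock_shown(cache: CachedScript) -> bool:
    return cache.has_certificate


first, path1 = load_script(None, "v1")
same, path2 = load_script(first, "v1")
updated, path3 = load_script(same, "v2")
print(path1, lock_shown(first))
print(path2, lock_shown(same))
print(path3, lock_shown(updated))
```

The model also shows why the repro was flaky: whether the lock appears depends on the history of the cache entry, not just on the page being loaded.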

With this new information in hand, building a reliable reduced repro case was easy– I just ripped out the guts of one of my existing PWAs and configured it so that it updated itself every five seconds. That way, on nearly every load, the cached ServiceWorker would be deemed outdated and the script redownloaded.
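One way to get an always-outdated script, sketched here as an assumption rather than a description of how my test PWA actually does it, is to serve a ServiceWorker script whose bytes change every five seconds. The `/sw.js` path, handler name, and script body are hypothetical, and a real repro would also need a page that registers the worker and an HTTPS origin so there is a lock icon to lose.

```python
import time
from http.server import BaseHTTPRequestHandler, HTTPServer

UPDATE_INTERVAL_SECONDS = 5


def service_worker_source(now: float) -> str:
    """Return script text that differs in every five-second window."""
    bucket = int(now // UPDATE_INTERVAL_SECONDS)
    return f"// build {bucket}\nself.addEventListener('fetch', () => {{}});\n"


class AlwaysOutdatedHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/sw.js":
            self.send_error(404)
            return
        body = service_worker_source(time.time()).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/javascript")
        self.send_header("Cache-Control", "no-cache")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


# To try it locally (plain HTTP, so no lock icon to observe):
# HTTPServer(("127.0.0.1", 8000), AlwaysOutdatedHandler).serve_forever()
```

Because the script body changes between most page loads, the browser’s update check keeps finding a byte-different script and takes the update path each time.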

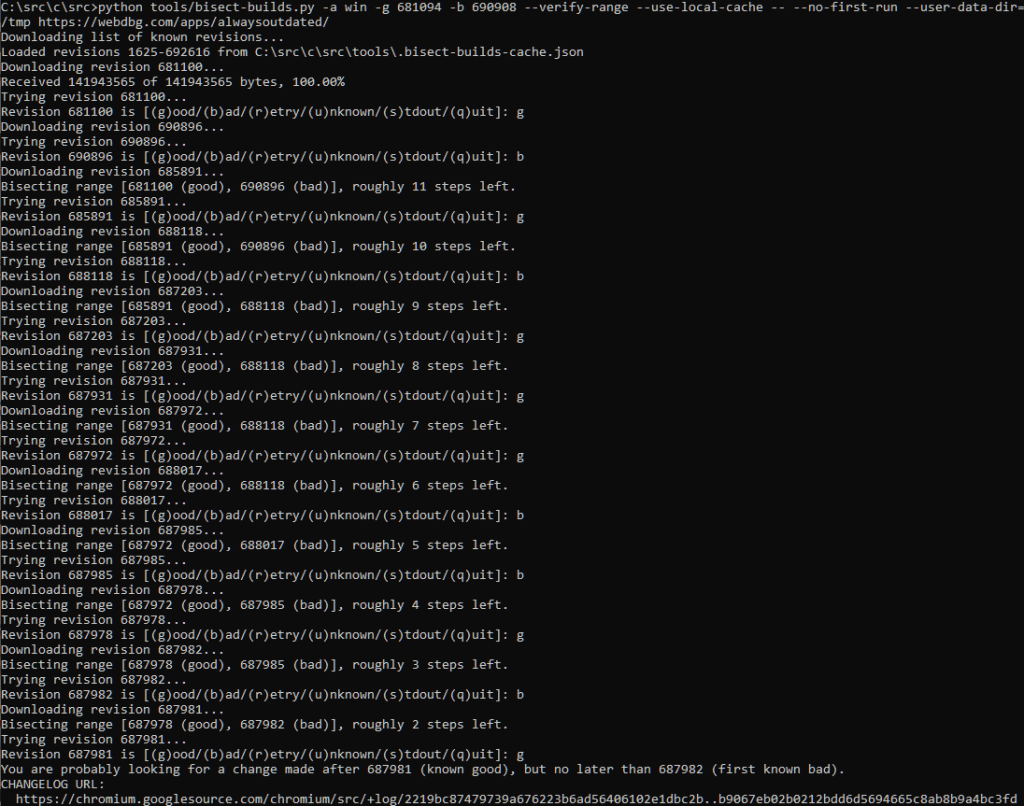

With this repro, we can kick off our bisect thusly:

python tools/bisect-builds.py -a win -g 681094 -b 690908 --verify-range --use-local-cache -- --no-first-run --user-data-dir=/temp https://webdbg.com/apps/alwaysoutdated/

… and grade each build based on whether the lock disappears on refresh:

Within a few minutes, we’ve identified the regression range:

In this case, the regression range contains just one commit— one that turns on the new ServiceWorker update check code. This confirms the Chromium engineer’s theory and that this problem is almost identical to the prior AppCache bug. In both cases, the problem is that the download request passed kURLLoadOptionNone and that prevented the certificate from being stored in the HttpResponseInfo serialized to the cache file. Changing the flag to kURLLoadOptionSendSSLInfoWithResponse results in the retrieval and storage of the ssl_info, including the certificate.
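The effect of that flag can be pictured with a toy model. The constant names below mirror the Chromium ones mentioned above, but the numeric values, the dictionary shape, and the `build_response_info` helper are illustrative assumptions, not Chromium’s implementation.

```python
# Names mirror the Chromium constants; the values here are illustrative.
K_URL_LOAD_OPTION_NONE = 0
K_URL_LOAD_OPTION_SEND_SSL_INFO_WITH_RESPONSE = 1 << 0


def build_response_info(options: int, certificate: str) -> dict:
    """Toy stand-in for the response info that gets serialized to the cache."""
    info = {"status": 200}
    if options & K_URL_LOAD_OPTION_SEND_SSL_INFO_WITH_RESPONSE:
        # Only requests that opt in carry the certificate along.
        info["ssl_info"] = {"certificate": certificate}
    return info


buggy = build_response_info(K_URL_LOAD_OPTION_NONE, "example-cert")
fixed = build_response_info(K_URL_LOAD_OPTION_SEND_SSL_INFO_WITH_RESPONSE, "example-cert")
print("ssl_info" in buggy, "ssl_info" in fixed)
```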

The fix was quick and straightforward; it will be available in Chrome 78.0.3902 and the following build of Edge based on that Chromium version. Notably, because the bug is caused by failure to put data in a cache file, the lock will remain missing even in later builds until either the ServiceWorker script updates again, or you hard refresh the page once.

-Eric

1 By way of comparison, when I last bisected an issue in Internet Explorer, circa 2012, it was an extraordinarily painful two-day affair.

2 You can use the command line arguments in the variations page (starting at --force-fieldtrials=) to force the bisect builds to use the same variations.

Chromium also has a bisect-variations script which you can use to help narrow down which of the dozens of active experiments is causing a problem.

If all else fails, you can also reset Chrome’s field trial configuration using chrome.exe --reset-variation-state to see if the repro disappears.

3 Aside: Back in the days of Internet Explorer, WinINET had no way to preserve the certificate, so it always bypassed the cache for the first request to an HTTPS server so that the browser process would have a certificate available for its subsequent display needs.

4 I’ve copied a dozen of these builds to a folder on my Desktop to do quick manual checks when I don’t want to get all the way into a full bisect.