Many interesting problems in software design boil down to “I need my client application to know a secret, but I don’t want the user of that application (or malware) to be able to learn that secret.”

Some examples include:

- Digital Rights Management encryption keys

- Passwords stored in a Password Manager

- API keys to use web services

- Private keys for local certificate roots

- Configuration options (e.g. exclusions) for security software

…and likely others.

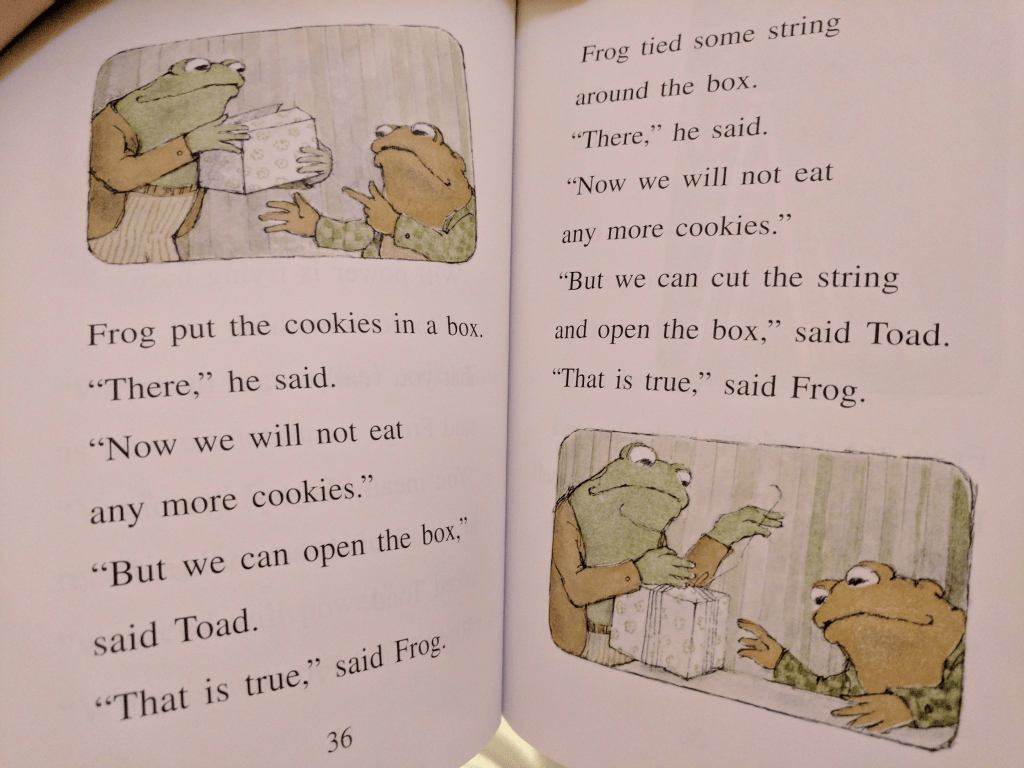

In general, if your design relies on having a client protect a secret from a local attacker, you are doomed. As eloquently outlined in the story “Cookies” in 1971’s Frog and Toad Together, anything the client does to try to protect a secret can also be undone by the client:

For example, a user can easily read passwords filled by a password manager out of the browser’s DOM. Malware can read them from memory or, because it runs inside the user’s account and therefore has access to the user’s encryption key, decrypt them from the browser’s encrypted storage. An attacker can extract keys by viewing or debugging a binary that contains them, or watch API keys flow by in outbound network traffic (even if HTTPS is used, since the attacker controls the client and can install their own root certificate to decrypt that traffic). A “sufficiently/extremely motivated” attacker with physical access to a device can steal hardware-stored encryption keys directly off the hardware. Et cetera.

However, just because a problem cannot be solved does not mean that developers won’t try.

“Trying” isn’t entirely madness: believing that every would-be attacker is “sufficiently motivated” is as big a mistake as believing that your protection scheme is truly invulnerable. If you can raise the difficulty level enough at a reasonable cost (in complexity, performance, etc.), it may be entirely rational to do so.

Some approaches include:

- Move the secret off the client. For example, instead of having your client call the service provider’s web service directly, have it call a proxy on your own website that adds the API key before forwarding the request. Of course, an attacker might still masquerade as your application (or automate it) to hit the service through your proxy, but at least they will be constrained in which calls they can make, and you can apply rate limits, IP reputation checks, etc. to mitigate abuse.

- Replace the key with short-lived tokens issued by an authenticated service. For example, the Microsoft Edge VPN feature requires the user to be signed in with their Microsoft account (MSA). The feature uses the user’s credentials to obtain tokens that are each good for 1GB of VPN traffic. An attacker wishing to abuse the VPN service therefore has to create fake Microsoft accounts, and Microsoft invests heavily in making that non-trivially expensive.

- Use hardware to make stealing secrets more difficult. For example, you can store a private key inside a TPM, which makes it very difficult to steal the key and move it to a different device. Keep in mind that locally-running malware could still use the key in place, treating the compromised device as a sock puppet.

- Similarly, you can use a Secure Enclave/Virtual Secure Mode (available on some devices) to help ensure that a secret cannot be exported, and try[1] to establish controls on which processes can ask the enclave to use the key. For example, Windows 11’s Enhanced Phishing Protection stores a hashed version of the user’s login password inside a secure enclave so that it can evaluate whether recently typed text contains the user’s password, without exposing that hash to arbitrary code running on the PC.

- Derive protection from other mechanisms, such as the law. For instance, there’s a Microsoft web API that requires every request to bear a matching hash of the request parameters. An attacker could easily steal the hash function out of the client code. However, Microsoft holds a patent on the hash function, so any application that contains this code contains prima facie evidence of patent infringement, and Microsoft can pursue remedies in court (assuming a functioning legal system in the target jurisdiction, etc., etc.).

- If the threat is a compromised device rather than a malicious user, enlist the user in helping to protect the secret. For example, re-encrypt the data with a “main password” known only to the user, require off-device confirmation of credential use, etc. Locally-running malware then has only a limited opportunity to abuse the secret, because the secret is exposed only while the user is actively unlocking or approving its use.
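A minimal sketch of the proxy approach from the first bullet above, in Python using only the standard library. Everything specific here is a hypothetical placeholder: the upstream URL `https://api.example.com`, the `X-Api-Key` header, the allowlisted paths, and the 30-requests-per-minute limit. The sketch only builds the outbound request (the HTTP server around it is omitted) so you can see where the key gets attached and where the abuse controls sit.

```python
import time
from collections import defaultdict, deque
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical configuration; real values would come from your server's config.
UPSTREAM_BASE = "https://api.example.com"
UPSTREAM_API_KEY = "server-side-secret"    # lives only on the proxy, never in the client
ALLOWED_PATHS = {"/v1/translate", "/v1/detect"}
MAX_REQUESTS_PER_MINUTE = 30

# Client IP -> timestamps of that client's recent accepted requests.
_recent = defaultdict(deque)


class Rejected(Exception):
    """Raised when the proxy refuses to forward a client's call."""


def build_upstream_request(path, params, client_ip, now=None):
    """Validate a client call and return the Request to forward upstream."""
    now = time.monotonic() if now is None else now

    # Constrain which upstream calls can be made through the proxy at all.
    if path not in ALLOWED_PATHS:
        raise Rejected("endpoint not exposed through the proxy")

    # Sliding one-minute window per client IP.
    window = _recent[client_ip]
    while window and now - window[0] >= 60:
        window.popleft()
    if len(window) >= MAX_REQUESTS_PER_MINUTE:
        raise Rejected("rate limit exceeded")
    window.append(now)

    url = f"{UPSTREAM_BASE}{path}?{urlencode(params)}"
    # The key is attached here, server-side; the client never sees it.
    return Request(url, headers={"X-Api-Key": UPSTREAM_API_KEY})
```

Because the key exists only in the proxy's process, there is nothing to extract from the client binary or its traffic; an attacker can still drive the proxy, but only against the allowlisted endpoints and only at the rate you permit.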
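To make the request-hash scheme concrete, here is a generic sketch that signs canonicalized request parameters with a key embedded in the client. It is deliberately not Microsoft's patented function, which isn't described here; `EMBEDDED_CLIENT_KEY` and the choice of HMAC-SHA256 are assumptions. The client and server run the same function with the same constant, which is exactly why an attacker who lifts both out of the client can mint valid requests, leaving the legal protection as the real deterrent.

```python
import hashlib
import hmac
from urllib.parse import urlencode

# Hypothetical constant compiled into every copy of the client. Anyone who
# disassembles the client can recover it; that is the weakness discussed above.
EMBEDDED_CLIENT_KEY = b"shipped-in-the-binary"


def sign_params(params):
    """Hash the request parameters in a canonical (sorted) order."""
    canonical = urlencode(sorted(params.items()))
    return hmac.new(EMBEDDED_CLIENT_KEY, canonical.encode(), hashlib.sha256).hexdigest()


def server_accepts(params, signature):
    """Server-side check: recompute the hash and compare in constant time."""
    return hmac.compare_digest(sign_params(params), signature)
```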
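For the “main password” approach in the last bullet, here is a minimal sketch of deriving the vault key from the user's password with PBKDF2 (`hashlib.pbkdf2_hmac`), so that the key never sits on disk. The iteration count, 16-byte salt, and `b"vault-verifier"` label are assumptions. The Python standard library has no AES, so the actual decryption step is only indicated in a comment; in practice you would pass the derived key to an authenticated cipher such as AES-GCM from a vetted crypto library.

```python
import hashlib
import hmac
import os

# Assumed parameters; tune the iteration count to your hardware and threat model.
PBKDF2_ITERATIONS = 600_000
VERIFIER_LABEL = b"vault-verifier"


def derive_key(main_password, salt):
    """Stretch the user's main password into a 32-byte key."""
    return hashlib.pbkdf2_hmac("sha256", main_password.encode(), salt, PBKDF2_ITERATIONS)


def new_vault_header(main_password):
    """Create the salt and verifier stored on disk; the password itself is never stored."""
    salt = os.urandom(16)
    key = derive_key(main_password, salt)
    verifier = hmac.new(key, VERIFIER_LABEL, hashlib.sha256).digest()
    return {"salt": salt, "verifier": verifier}


def unlock(main_password, header):
    """Return the vault key only if the user supplies the right main password."""
    key = derive_key(main_password, header["salt"])
    check = hmac.new(key, VERIFIER_LABEL, hashlib.sha256).digest()
    if not hmac.compare_digest(check, header["verifier"]):
        raise ValueError("wrong main password")
    # Use `key` with an authenticated cipher (e.g. AES-GCM) to decrypt the vault.
    return key
```

Only the salt, the verifier, and the ciphertext are persisted, so malware that copies the vault file must still guess the main password, and malware on the device can use the key only during the window when the user has unlocked the vault.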

-Eric

[1] *Try* is the operative word here. From inside a VTL1 (Virtual Trust Level 1) enclave, it’s very difficult to determine the identity of the calling process. Even if you can do so, it’s also non-trivial to ensure that the code running in the expected calling process wasn’t injected by malware. Stated differently, the API exposed by an enclave must be designed on the assumption that it will be called by malicious VTL0 code. Using enclaves properly is tricky.