Last Update: November 29, 2023

Web browsers are notorious for being memory hogs, but this can be a bit misleading: in most cases, the loaded pages account for the majority of memory consumption.

Unfortunately, some pages are not very good stewards of the system’s memory. One particularly common problem is the memory leak: a site uses fetch() to read an endless stream of data from some web service, then tries to hold onto the ever-growing response data forever.
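The usual fix is to keep only the slice of the stream you still need. Here is a minimal sketch of that idea, written in C++ to match the Chromium excerpt later in this post (the same pattern applies to a JavaScript stream reader). The class name `RecentChunks` and its methods are made up for illustration.

```cpp
#include <cstddef>
#include <deque>
#include <string>
#include <utility>

// Hypothetical consumer for an endless stream: it keeps only the newest
// `max_chunks` chunks, so memory stays bounded however long it runs.
class RecentChunks {
 public:
  explicit RecentChunks(std::size_t max_chunks) : max_chunks_(max_chunks) {}

  void Add(std::string chunk) {
    chunks_.push_back(std::move(chunk));
    while (chunks_.size() > max_chunks_) {
      chunks_.pop_front();  // drop the oldest data instead of hoarding it
    }
  }

  std::size_t size() const { return chunks_.size(); }

  // Precondition: at least one chunk has been added.
  const std::string& oldest() const { return chunks_.front(); }

 private:
  std::size_t max_chunks_;
  std::deque<std::string> chunks_;
};
```

A leaky version would be the same class without the `while` loop: every chunk ever received stays reachable, and the process grows until something stops it.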

Sandbox Limits

In Chromium-based browsers on Windows[1] and Linux, a sandboxed 64-bit process’s memory consumption is bounded by a limit on the Windows Job object holding the process. For the renderer processes that load pages and run JavaScript, the limit was 4 GB back in 2017 but now it can be as high as 16 GB (UPDATE: 1 TB as of 2023):

    int64_t physical_memory = base::SysInfo::AmountOfPhysicalMemory();
    ...
    if (physical_memory > 16 * GB) {
      memory_limit = 16 * GB;
    } else if (physical_memory > 8 * GB) {
      memory_limit = 8 * GB;
    }

If the tab crashes and the error page shows SBOX_FATAL_MEMORY_EXCEEDED, it means that the tab used more memory than permitted for the sandboxed process[2].

The sandbox limits are so high that exceeding them is almost always an indication of a memory leak or JavaScript error on the part of the site.
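To make the tiering in the excerpt concrete, here is a small self-contained sketch. The function name `PickRendererLimit` is hypothetical, and the 4 GB fallback is an assumption taken from the 2017 figure quoted above; the real code hides that part behind the `...`.

```cpp
#include <cstdint>

constexpr std::int64_t GB = 1024LL * 1024 * 1024;

// Hypothetical helper mirroring the tiers shown in the excerpt.
// ASSUMPTION: machines with 8 GB or less fall back to a 4 GB limit.
constexpr std::int64_t PickRendererLimit(std::int64_t physical_memory) {
  std::int64_t memory_limit = 4 * GB;  // assumed default tier
  if (physical_memory > 16 * GB) {
    memory_limit = 16 * GB;
  } else if (physical_memory > 8 * GB) {
    memory_limit = 8 * GB;
  }
  return memory_limit;
}

// The comparisons are strict, so a machine reporting exactly 16 GB
// lands in the 8 GB tier rather than the 16 GB one.
static_assert(PickRendererLimit(32 * GB) == 16 * GB, "more than 16 GB installed");
static_assert(PickRendererLimit(16 * GB) == 8 * GB, "exactly 16 GB installed");
static_assert(PickRendererLimit(8 * GB) == 4 * GB, "exactly 8 GB installed");
```

This only models the older tiered logic; it says nothing about how the newer 1 TB figure is chosen.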

Running out of memory

Beyond hitting the sandbox limits, a process can simply run out of memory: if it asks for memory from the OS and the OS says “Sorry, nope”, the process will typically crash.
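For a feel of what “Sorry, nope” looks like from inside a program, here is a rough C++ sketch. `TryAllocate` is a hypothetical helper, not something from Chromium. It uses the standard non-throwing form of `operator new`, which returns a null pointer on failure instead of throwing `std::bad_alloc`.

```cpp
#include <cstddef>
#include <new>

// Hypothetical helper: returns true if the C++ runtime could hand out
// `bytes` bytes, false if the request was refused.
bool TryAllocate(std::size_t bytes) {
  if (bytes == 0) {
    return true;  // nothing to reserve
  }
  void* block = ::operator new(bytes, std::nothrow);
  if (block == nullptr) {
    return false;  // the allocator said "Sorry, nope"
  }
  // Touch one byte so the allocation is actually used before release.
  static_cast<volatile char*>(block)[0] = 1;
  ::operator delete(block);
  return true;
}
```

A small request such as `TryAllocate(4096)` should normally succeed, while an absurd one such as `TryAllocate(static_cast<std::size_t>(-1) / 2)` should be refused. Keep in mind that on systems that overcommit memory, a successful return does not guarantee that every byte is backed by real memory yet.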

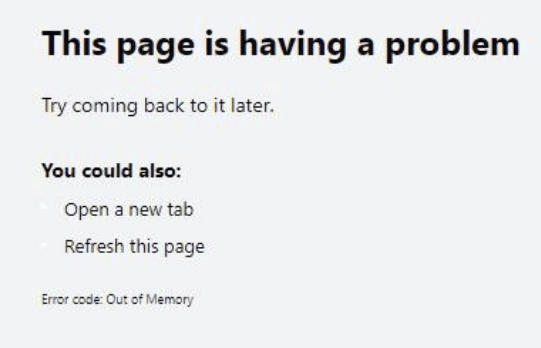

If the tab crashes, the error code will be rendered in the page:

Or, that’s what happens in the ideal case, at least.

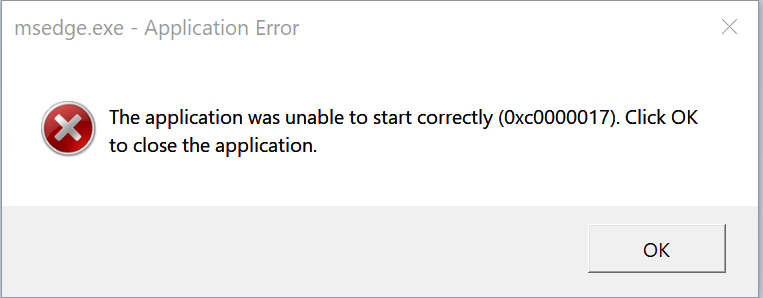

If your system is truly out of free memory, all sorts of things are likely to fail: random processes around the system will likely fall over, and the critical top-level Browser process itself might crash, blowing away all of your tabs.

In my case, the crash reporter itself crashes, leading to this unfriendly dialog:

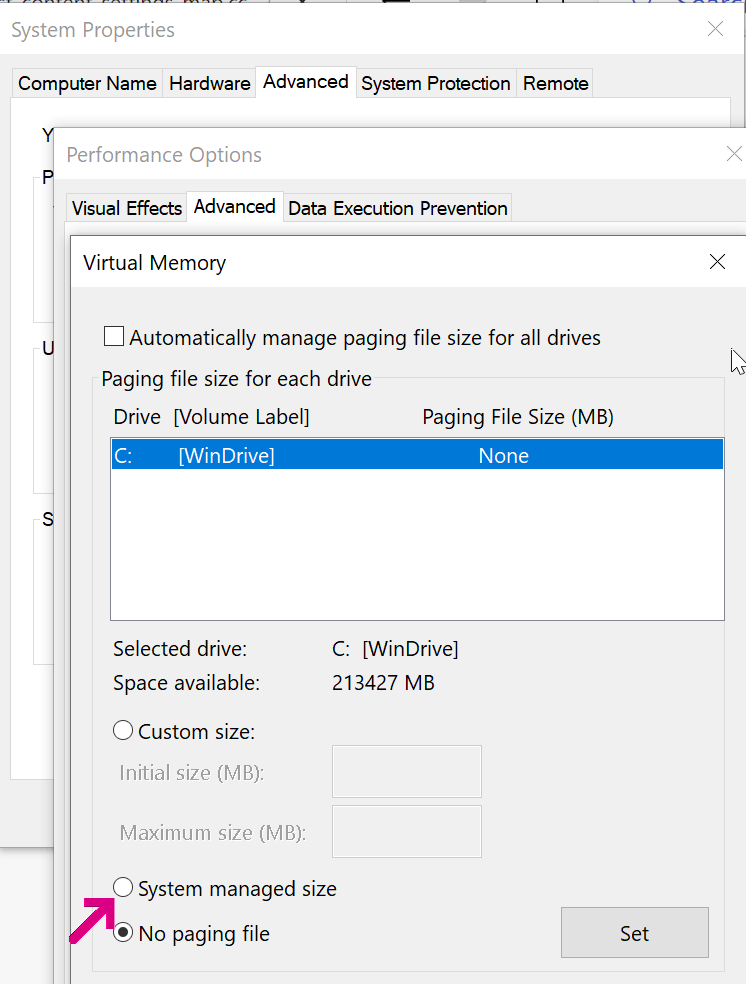

To make these sorts of catastrophic crashes less likely, allow Windows to manage the size of your page file.

Turning off the OS page file, as I had in the screenshot above, means that when your last block of physical memory is exhausted, rather than slowing down, random processes on your system will fall over.

32-bit Processes and Fragmentation

Notably, no sandbox limit is set for a 32-bit browser instance; on 32-bit Windows, a 32-bit process can typically allocate at most 2 GB (std::numeric_limits<int>::max() == 2147483647) before crashing with an OOM. For a 32-bit process running on 64-bit Windows, a process compiled as LargeAddressAware (like Chromium) can allocate up to 4 GB.
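The 2 GB and 4 GB figures fall straight out of pointer width. A quick compile-time check (the constant names are my own):

```cpp
#include <cstdint>
#include <limits>

constexpr std::uint64_t GiB = 1ULL << 30;

// A 32-bit pointer can name 2^32 distinct byte addresses.
constexpr std::uint64_t kAddressable32 = 1ULL << 32;

// By default, 32-bit Windows reserves the upper half of that range for
// the kernel, leaving the lower 2^31 bytes to the process.
constexpr std::uint64_t kDefaultUserSpace = 1ULL << 31;

static_assert(kAddressable32 == 4 * GiB, "full 32-bit range is 4 GiB");
static_assert(kDefaultUserSpace == 2 * GiB, "default user range is 2 GiB");
static_assert(std::numeric_limits<std::int32_t>::max() == 2147483647,
              "largest signed 32-bit value");
static_assert(kDefaultUserSpace - 1 == 2147483647,
              "2 GiB is one past the largest signed 32-bit value");
```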

32-bit processes also often encounter another problem: even if you haven’t reached the 2 GB process limit, it’s often hard to allocate more than a few hundred megabytes of contiguous memory because of address space fragmentation. If you encounter an “Out of Memory” error in a process that doesn’t seem to be using very much memory, visit chrome://version to ensure that you aren’t using a 32-bit browser.
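Fragmentation is easier to see in a toy model. The sketch below treats the address space as a row of fixed-size chunks and compares total free space against the largest contiguous free run. All names here are invented for the example, and real allocators are far more complicated.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Toy model: each element is one fixed-size chunk of address space;
// true means the chunk is in use.
struct FreeStats {
  std::size_t total_free = 0;   // free chunks anywhere
  std::size_t largest_run = 0;  // longest stretch of adjacent free chunks
};

FreeStats MeasureFree(const std::vector<bool>& in_use) {
  FreeStats stats;
  std::size_t run = 0;
  for (bool used : in_use) {
    if (used) {
      run = 0;
    } else {
      ++run;
      ++stats.total_free;
      stats.largest_run = std::max(stats.largest_run, run);
    }
  }
  return stats;
}

// Marks one chunk as in use every `stride` chunks, starting at chunk 0.
std::vector<bool> ScatterPins(std::size_t chunks, std::size_t stride) {
  std::vector<bool> in_use(chunks, false);
  if (stride == 0) {
    return in_use;  // nothing sensible to pin
  }
  for (std::size_t i = 0; i < chunks; i += stride) {
    in_use[i] = true;
  }
  return in_use;
}
```

If you picture 2048 chunks of 1 MB each (a 2 GB space), `MeasureFree(ScatterPins(2048, 256))` reports 2040 free chunks but a largest run of only 255. Eight tiny, badly placed allocations are enough to make a 256 MB contiguous request fail even though almost all of the space is free.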

Limits

Alex Gough from Chromium (subsequently updated by others) provided the following breakdown of other memory-related limits for Windows/Linux:

- Renderer
    - Per-process – 1TB on Windows, was 8-64 GiB (based on system memory)
    - V8 Isolate – Shared 4 GB (reservation) per process[3]
    - V8 code range – Shared 128 MiB per process[3]
    - Wasm Memory – 4 GiB kV8MaxWasmMemoryPages
    - Wasm Code Size – 4 GiB kMaxWasmCodeMemory
    - Single partition_alloc allocation – INT_MAX
    - Per-slab allocation limit from allocator shims – 2 GiB
    - Web Assembly Memory “Cage” (128GB-1TB)
    - OilPan Garbage Collector “Cages” (2 x 2GB cages)
- GPU
    - 8-64 GiB (based on system memory)
- Network
- Other Utility Processes
- Browser
    - None

Q: Wait, what’s a “cage”?

A: See the comment block here. The general idea is that there can be performance and security benefits to allocating objects in different ways than a simple memory allocation of the needed size for the object and the associated pointer to the address of that object. These strategies do, however, impact the size and number of objects that can be allocated.
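One way to picture the pointer compression cage from the list above: if every object lives inside a single reserved 4 GB region, a reference can be stored as a 32-bit offset from the region’s base rather than as a full 64-bit pointer. The `Cage` struct below is a hypothetical illustration of that trade-off, not V8’s actual scheme.

```cpp
#include <cstdint>

// Hypothetical model of a 4 GB "cage". Addresses are plain integers here
// so the arithmetic is easy to follow.
struct Cage {
  std::uint64_t base;  // start address of the reserved region

  static constexpr std::uint64_t kSize = 1ULL << 32;  // 4 GB of offsets

  // True if `address` falls inside the reserved region.
  bool Contains(std::uint64_t address) const {
    return address >= base && address - base < kSize;
  }

  // Precondition: Contains(address). Stores the reference in 32 bits.
  std::uint32_t Compress(std::uint64_t address) const {
    return static_cast<std::uint32_t>(address - base);
  }

  // Rebuilds the full address from the 32-bit offset.
  std::uint64_t Decompress(std::uint32_t offset) const {
    return base + offset;
  }
};
```

The benefit is that references take half the space; the cost is that nothing outside the 4 GB window can be referenced this way, which is where the size limit comes from.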

Renderer Test Page and Tooling

I’ve built a Memory Use test page that allows you to use gobs of memory to see how your browser (and Operating System) reacts. Note that memory accounting is complicated and sneaky: ArrayBuffers aren’t considered JavaScript memory, and on Mac Chromium, they’re not backed by “real memory” until used.

You can use the Browser Task Manager (hit Shift+Esc on Windows or use Window > Task Manager on Mac) to see how much memory your tabs and browser extensions are using.

You can also use the Memory tab in the F12 Developer tools to peek at heap memory usage. Click the Take Snapshot button to get a peek at where memory is being used (and potentially wasted).

Using lots of memory isn’t necessarily bad; memory not being used is memory that’s going to waste. But you should always ensure that your web application isn’t holding onto data that it will never need again.

Chromium has a design doc on the challenges of accounting for memory: https://chromium.googlesource.com/chromium/src/+/HEAD/docs/memory/key_concepts.md.

Memory: Use it, but don’t abuse it.

-Eric

[1] Due to platform limitations, Chromium on OS X does not limit the sandbox size.

[2] The error code isn’t fully reliable; Chrome’s test code notes:

    // On 64-bit, the Job object should terminate the renderer on an OOM.
    // However, if the system is low on memory already, then the allocator
    // might just return a normal OOM before hitting the Job limit.

[3] Starting with M92, the shared pointer compression cage means that all V8 Isolates in a given process share a common 4 GB reservation. This change was made in preparation for the shared structs proposal.