Earlier today, we looked at two techniques attackers use to evade anti-phishing filters. Both rely on lures that are not served from the HTTP and HTTPS URLs that are subject to reputation analysis.

A third attack technique is to send a lure that entices a user to visit a legitimate site and perform an unsafe operation on that site. In such an attack, the phisher never collects the user’s password directly, and because the brunt of the attack occurs while on the legitimate site, anti-phishing filters typically have no way to block the attacks. I will present three examples of such attacks in this post.

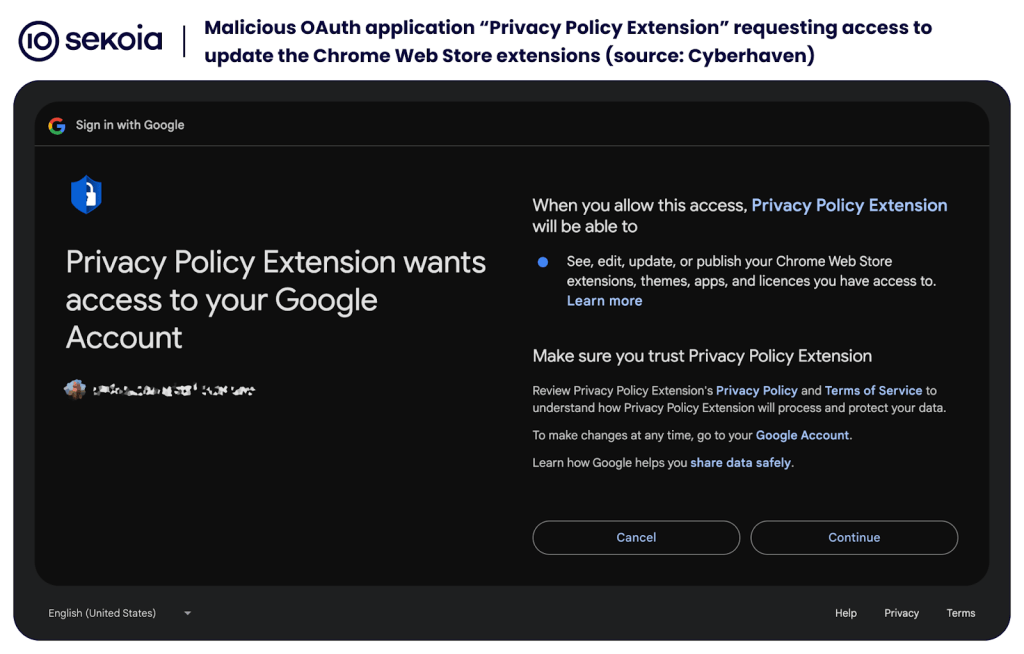

“Consent Phishing”

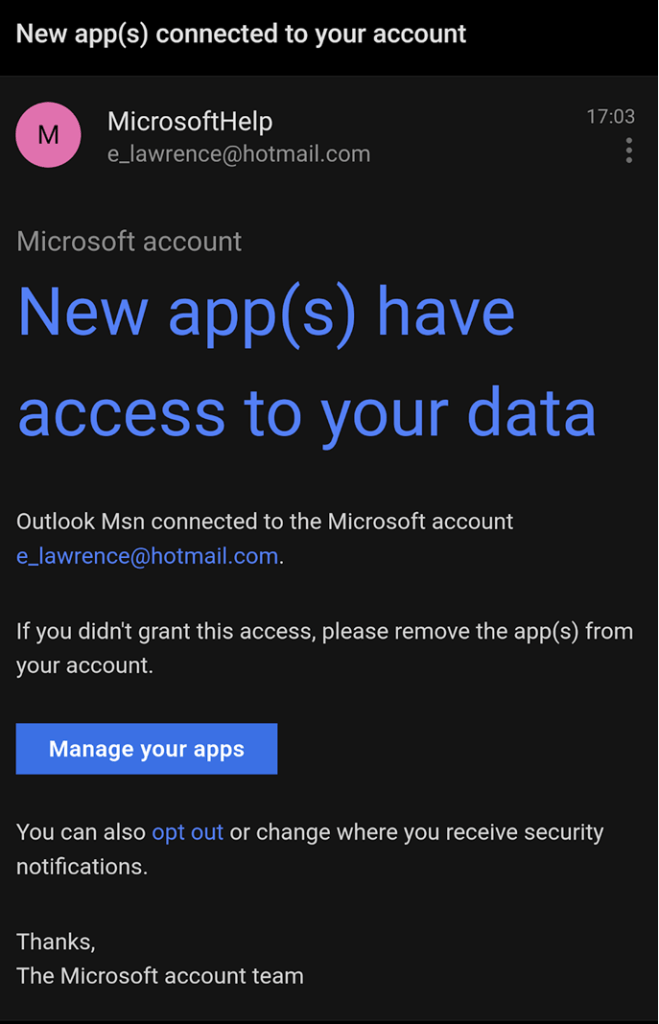

In the first example, the attacker sends their target an email containing lure text that entices the user to click a link in the email:

The attacker controls the text of the email and can thus prime the user to make an unsafe decision on the legitimate site, which the attacker does not control. In this case, clicking the link brings the victim to an account configuration page. If the user is prompted for credentials when clicking the link, the credentials are collected on the legitimate site (not a phishing URL), so anti-phishing filters have nothing to block.

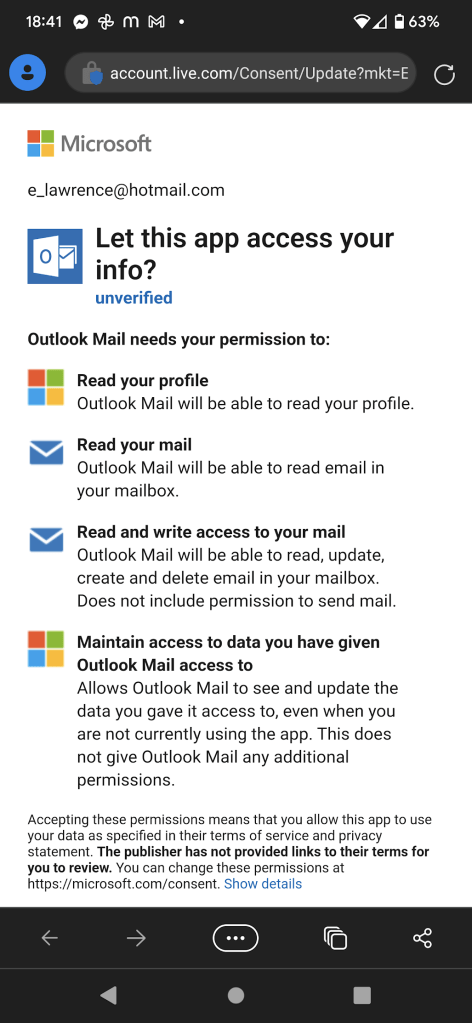

The attacker has very limited control over the contents of the account config page, but thanks to priming, the user is likely to make a bad decision, unknowingly granting the attacker access to the content of their account:

If access is granted, the attacker has the ability to act “as the user” when it comes to their email. Beyond sensitive content within the user’s email account, most sites offer password recovery options bound to an email address, and after compromising the user’s email account the attacker can likely pivot to attack their credentials on other sites.
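To make the mechanics concrete, here is a minimal Python sketch of what inspecting such a link might look like. It assumes the lure points at a standard OAuth 2.0 authorization request. The client ID, the redirect URI, and the list of "high-risk" scopes below are hypothetical values I picked for illustration, not details taken from any real campaign.

```python
from urllib.parse import urlsplit, parse_qs

# Assumption for illustration only: scopes we choose to treat as high-risk
# because they expose mail or allow long-lived access.
HIGH_RISK_SCOPES = {"mail.read", "mail.readwrite", "mail.send", "offline_access"}


def describe_consent_link(url: str) -> dict:
    """Summarize who is asking for what in an OAuth 2.0 authorization URL."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    # The scope parameter is a space-delimited list; parse_qs decodes %20 for us.
    scopes = query.get("scope", [""])[0].split()
    return {
        "host": parts.hostname,  # the legitimate identity provider
        "client_id": query.get("client_id", [None])[0],  # the app requesting access
        "redirect_uri": query.get("redirect_uri", [None])[0],
        "scopes": scopes,
        "risky_scopes": [s for s in scopes if s.lower() in HIGH_RISK_SCOPES],
    }


# Hypothetical lure link: the host is legitimate, the parameters are not.
example_link = (
    "https://login.microsoftonline.com/common/oauth2/v2.0/authorize"
    "?client_id=00000000-0000-0000-0000-000000000000"
    "&response_type=code"
    "&redirect_uri=https%3A%2F%2Fattacker.example%2Fcallback"
    "&scope=Mail.Read%20offline_access"
)

summary = describe_consent_link(example_link)
for key, value in summary.items():
    print(f"{key}: {value}")
```

The point of the sketch is that the hostname, which is the part a URL reputation filter can judge, is entirely legitimate. Everything dangerous lives in the query parameters, which identify the attacker's app and the permissions it wants.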

This technique isn’t limited to Microsoft accounts, as demonstrated by this similar attack against Google:

… and this recent campaign against users of Salesforce.

“Invoice Scam”

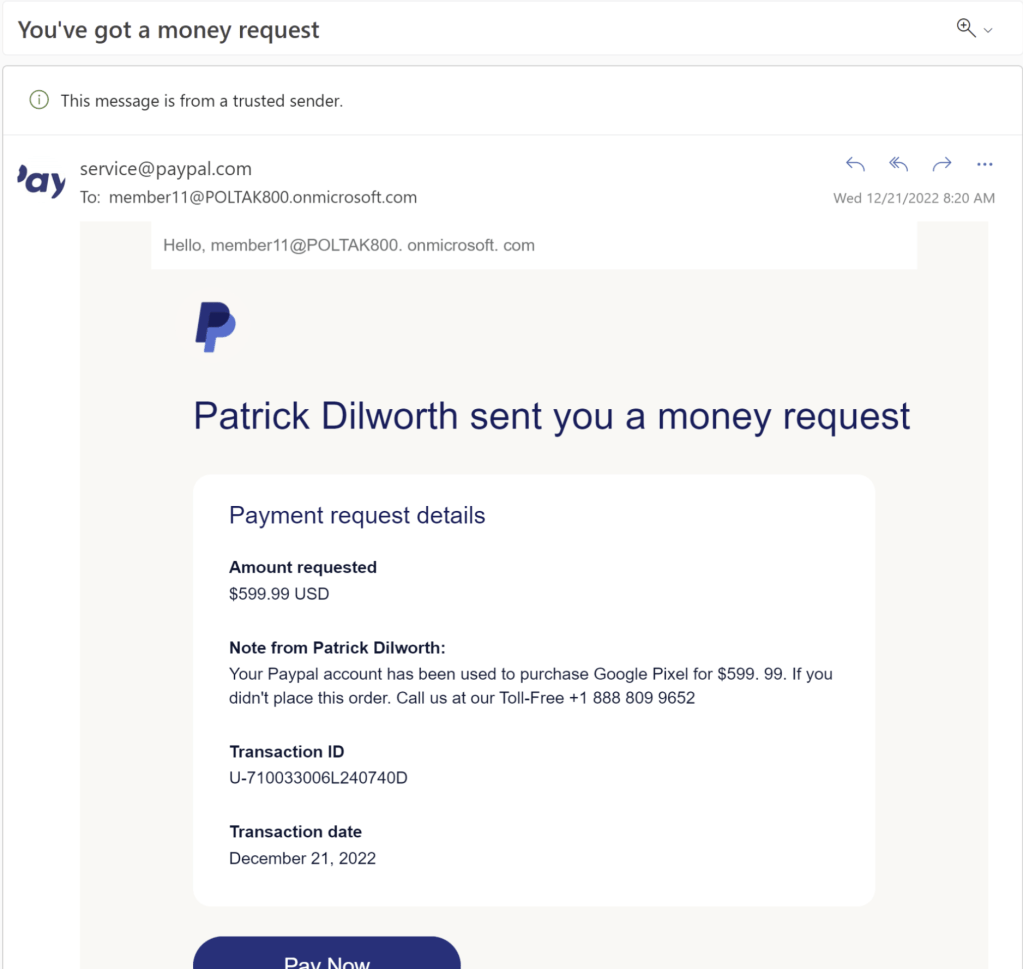

A second example is a long-running attack via sites like PayPal. PayPal allows people to send requests for money to one another, and the requester (here, the attacker) controls the content of the request. In this case, the lure is sent by PayPal itself. As you can see, Outlook even notes that “This message is from a trusted sender” without the important caveat that the email also contains untrusted and inaccurate content authored by a malicious party.

A victim encountering this email may respond in one of two ways. First, they might pick up the phone and call the phone number provided by the attacker, and the attack would then continue via telephone. Because the attack is now “offline”, anti-phishing filters will not get in the way.

Alternatively, a victim encountering the email might click on the link, which brings them to the legitimate PayPal website. Anti-phishing filters have nothing to say here, since the victim has been directed to the legitimate site (albeit with dangerous parameters). Perhaps alarmingly, PayPal has decided to “reduce friction” and automatically trust devices you’ve previously used, meaning that users might not even be prompted for a password when clicking through the link:

Misleading trust indicators and the desire for simple transactions mean that a user is just clicks away from losing hundreds of dollars to an attacker.

“Malicious Extensions”

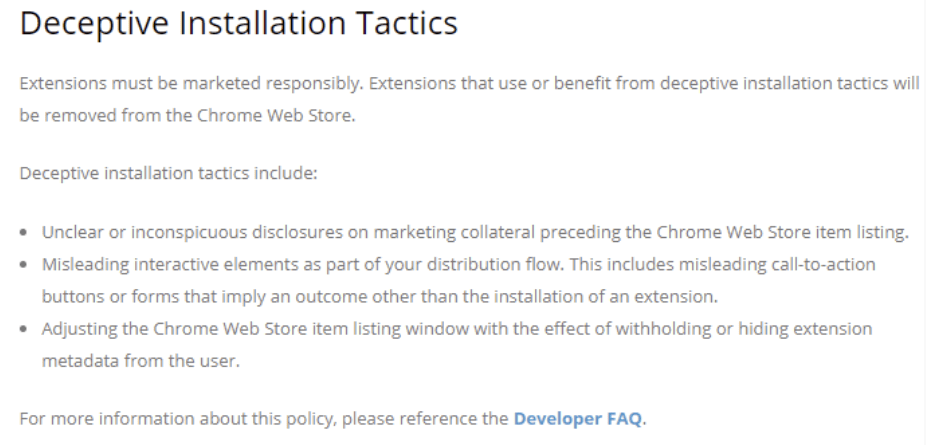

In the final example of a priming attack, a malicious website can trick the user into installing a malicious browser extension. This is often positioned as a security check, and often uses assorted trickery to try to prevent the user from recognizing what’s happening, including sizing and positioning the Web Store window in ways to try to obscure important information. Google explicitly bans such conduct in their policy:

… but technical enforcement is more challenging.

Because the extension is hosted and delivered by the “official” web store, the browser’s security restrictions and anti-malware filters are not triggered.

After a victim installs a malicious browser extension, the extension can hijack their searches, spam notifications, steal personal information, or embark upon other attacks until such time as the extension is recognized as malicious by the store and nuked from orbit.

Best Practices

When building web experiences, it’s important that you consider the effect of priming: an attacker can structure lures to confuse a user and cause them to misunderstand a choice offered by your website. Any flow that offers the user a security choice should have a simple and unambiguous option for users to report “I think I’m being scammed”, allowing you to take action against abuse of your service and protect your customers.

If you’re an Entra administrator, you can configure your tenant to restrict individual users from granting consent to applications:
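For admins who prefer scripting to portal clicks, my understanding is that the same setting is exposed on the tenant's authorization policy in Microsoft Graph, where user consent is governed by the `ManagePermissionGrantsForSelf.*` entries in `defaultUserRolePermissions.permissionGrantPoliciesAssigned`. The sketch below only builds the request body and sends nothing. The helper name and the sample policy list are illustrative assumptions, so please check the current Graph documentation before applying anything like this to a real tenant.

```python
import json

# Assumption (from my reading of the Microsoft Graph docs): user consent is
# controlled by policy IDs that start with "ManagePermissionGrantsForSelf."
SELF_CONSENT_PREFIX = "managepermissiongrantsforself."
AUTHORIZATION_POLICY_URL = (
    "https://graph.microsoft.com/v1.0/policies/authorizationPolicy"
)


def build_disable_user_consent_patch(currently_assigned):
    """Hypothetical helper: drop self-consent policies, keep everything else.

    Other assigned policies (for example, resource-owner consent policies)
    are preserved so that the PATCH does not remove them as a side effect.
    """
    kept = [
        policy_id
        for policy_id in currently_assigned
        if not policy_id.lower().startswith(SELF_CONSENT_PREFIX)
    ]
    return {
        "defaultUserRolePermissions": {
            "permissionGrantPoliciesAssigned": kept,
        }
    }


# Sample input: in practice you would first read the list from the tenant.
current = [
    "ManagePermissionGrantsForSelf.microsoft-user-default-legacy",
    "ManagePermissionGrantsForOwnedResource."
    "microsoft-dynamically-managed-permissions-for-team",
]

patch_body = build_disable_user_consent_patch(current)
print("PATCH", AUTHORIZATION_POLICY_URL)
print(json.dumps(patch_body, indent=2))
```

Filtering the existing list rather than sending an empty one is a deliberate choice here: replacing the whole collection could silently remove unrelated policies that the tenant still depends on.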

-Eric