For the first few years of the web, developers pretty much coded whatever they thought was cool and shipped it. Specifications, if written at all, were an afterthought.

Then, for the next two decades, spec authors drafted increasingly elaborate specifications with optional features and extensibility points meant to enable future work.

Unfortunately, browser and server developers often only implemented enough of the specs to ensure interoperability, and rarely tested that their code worked properly in the face of features and data allowed by the specs but not implemented in the popular clients.

Over the years, web builders started to notice that specs’ extensibility points were rusting shut: if a new or upgraded client tried to make use of a new feature, or otherwise changed what it sent in ways the specs allowed, existing servers would fail when they encountered the new values. (Formally, this is called ossification.)

In light of this, spec authors came up with a clever idea: clients should send random dummy values allowed by the spec, so that non-compliant servers that fail to properly ignore those values break immediately rather than years later. This concept is called GREASE (with the backronym “Generate Random Extensions And Sustain Extensibility”), and it was first implemented for the values sent by the TLS handshake:

When connecting to servers, clients would claim to support new ciphersuites and handshake extensions, and intolerant servers would fail. Users would holler, and engineers could follow up with the broken site’s authors and developers about how to fix their code. To avoid “breaking the web” too broadly, GREASE is typically enabled experimentally at first, in Canary and Dev channels. Only after the scope of the breakages is better understood does the change get enabled for most users. GREASE is now unconditionally enabled for all TLS handshakes in Chromium, and the browser does not offer any flags/overrides to turn it off.
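As a rough illustration of the mechanism, here is a minimal Python sketch of a client offer and two server behaviors. The reserved values follow the `0x?A?A` pattern that RFC 8701 sets aside for TLS GREASE; the function names (`client_offer`, `tolerant_select`, `intolerant_select`) are made up for this example and do not correspond to any real TLS library.

```python
import random

# RFC 8701 reserves sixteen 16-bit values of the form 0x?A?A
# (0x0A0A, 0x1A1A, ..., 0xFAFA) for GREASE.
GREASE_VALUES = [(n << 12) | 0x0A00 | (n << 4) | 0x0A for n in range(16)]


def is_grease(value: int) -> bool:
    """True if a 16-bit value matches the reserved 0x?A?A pattern."""
    return (value & 0x0F0F) == 0x0A0A and (value >> 12) == ((value >> 4) & 0xF)


def client_offer(real_suites, rng=random):
    """Hypothetical client: put one random GREASE value ahead of the real ones."""
    return [rng.choice(GREASE_VALUES)] + list(real_suites)


def tolerant_select(offered, supported):
    """Compliant server: skip anything unknown, pick the first match."""
    for suite in offered:
        if suite in supported:
            return suite
    return None


def intolerant_select(offered, supported):
    """Ossified server: chokes on any value it does not recognize."""
    for suite in offered:
        if suite not in supported:
            raise ValueError(f"unknown cipher suite 0x{suite:04X}")
    return offered[0] if offered else None


if __name__ == "__main__":
    # 0x1301 and 0x1302 are the TLS 1.3 AES-GCM cipher suites.
    offer = client_offer([0x1301, 0x1302])
    print([f"0x{v:04X}" for v in offer])
    print(hex(tolerant_select(offer, {0x1301, 0x1302})))
```

The tolerant server never notices the dummy value, while the intolerant one fails on every connection, which is exactly the loud, early failure GREASE is meant to provoke.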

GREASE has proven such a success for TLS handshakes that the idea has started to appear in new places. Last week, the Chromium project turned on GREASE for HTTP/2 in Canary/Dev for 50% of users, causing connection failures for many popular sites, including some run by Microsoft. These sites will need to be fixed in order to properly load in the new builds of Chromium.

    // Enable "greasing" HTTP/2, that is, sending SETTINGS parameters with
    // reserved identifiers and sending frames of reserved types, respectively.
    // If greasing Frame types, an HTTP/2 frame with a reserved frame type will
    // be sent after every HEADERS and SETTINGS frame. The same frame will be
    // sent out on all connections to prevent the retry logic from hiding
    // broken servers.
    NETWORK_SWITCH(kHttp2GreaseSettings, "http2-grease-settings")
    NETWORK_SWITCH(kHttp2GreaseFrameType, "http2-grease-frame-type")

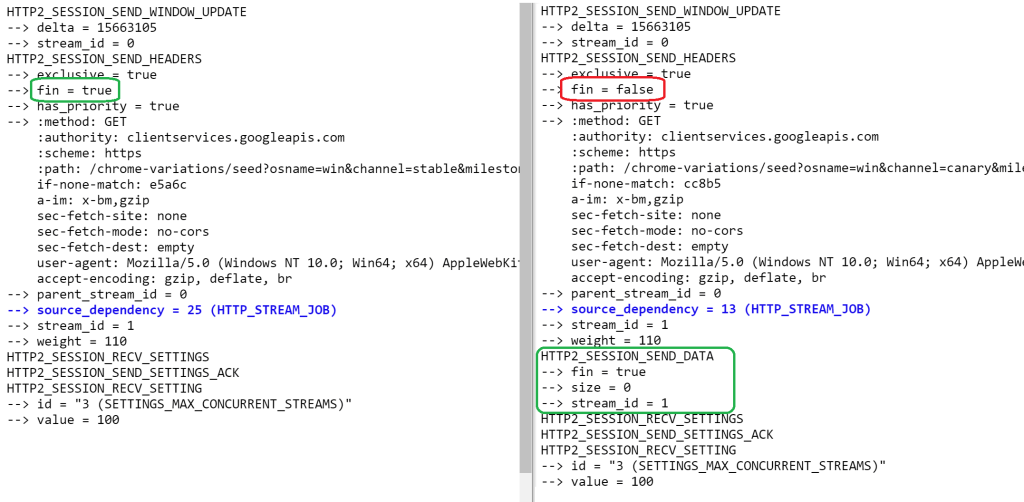

One interesting consequence of sending GREASE H2 Frames is that it requires moving the END_STREAM flag (recorded as fin=true in the netlog) from the HTTP2_SESSION_SEND_HEADERS frame into an empty (size=0) HTTP2_SESSION_SEND_DATA frame; unfortunately, the intervening GREASE Frame is not presently recorded in the netlog.
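To make that frame sequence concrete, here is a small Python sketch of the wire layout, under several assumptions. The 9-byte frame header, the type codes, and the flag bits come from the HTTP/2 spec (RFC 7540), which also requires receivers to ignore frames of unknown type. The frame type `0x0B`, its payload, the choice to send it on the request stream, and all function names are placeholders for this example, not what Chromium actually sends.

```python
import struct

# Frame types and flag bits defined by RFC 7540.
DATA, HEADERS = 0x0, 0x1
END_STREAM, END_HEADERS = 0x1, 0x4
KNOWN_TYPES = set(range(0x0, 0xA))  # DATA (0x0) through CONTINUATION (0x9)

# Assumption: an arbitrary unassigned type standing in for a GREASE frame.
GREASE_FRAME_TYPE = 0x0B


def encode_frame(ftype, flags, stream_id, payload=b""):
    """Serialize one frame: 24-bit length, type, flags, 31-bit stream id."""
    length = len(payload)
    header = struct.pack(">BHBBI", length >> 16, length & 0xFFFF,
                         ftype, flags, stream_id & 0x7FFFFFFF)
    return header + payload


def greased_request(stream_id, header_block):
    """HEADERS without END_STREAM, a reserved frame, then an empty DATA frame."""
    return (encode_frame(HEADERS, END_HEADERS, stream_id, header_block)
            + encode_frame(GREASE_FRAME_TYPE, 0, stream_id, b"dummy")
            + encode_frame(DATA, END_STREAM, stream_id, b""))


def receive(buf, strict=False):
    """Parse frames; a compliant receiver skips unknown types, a strict one fails."""
    frames, pos = [], 0
    while pos + 9 <= len(buf):
        hi, lo, ftype, flags, stream_id = struct.unpack_from(">BHBBI", buf, pos)
        length = (hi << 16) | lo
        payload = buf[pos + 9:pos + 9 + length]
        pos += 9 + length
        if ftype not in KNOWN_TYPES:
            if strict:
                raise ValueError(f"unknown frame type 0x{ftype:02X}")
            continue  # ignore and discard, as the spec requires
        frames.append((ftype, flags, stream_id & 0x7FFFFFFF, payload))
    return frames


if __name__ == "__main__":
    wire = greased_request(1, b"hypothetical-header-block")
    for ftype, flags, sid, payload in receive(wire):
        print(ftype, bool(flags & END_STREAM), sid, len(payload))
```

A compliant receiver sees only HEADERS (fin not set) followed by a zero-length DATA frame carrying END_STREAM, which matches the pattern in the netlog; a receiver written like `receive(..., strict=True)` is the kind of server the experiment exposes.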

You can try H2 GREASE in Chrome Stable using command line flags that enable GREASE settings values and GREASE frames respectively:

chrome.exe --http2-grease-settings bing.com

chrome.exe --http2-grease-frame-type bing.com

Alternatively, you can disable the experiment in Dev/Canary:

chrome.exe --disable-features=Http2Grease

GREASE is baked into the new HTTP/3 protocol (Cloudflare does it by default) and the new UA Client Hints specification (where it’s blowing up a fair number of sites). I expect we’ll see GREASE-like mechanisms appearing in most new web specs where there are optional or extensible features.

-Eric

PS: I’ve tried to apply GREASE principles to my life as well. When I first signed up for my Kilimanjaro trip, I worried that I wasn’t going to be able to book time off from work 18 months in advance. Ultimately, I concluded that if, when the time came, my employer couldn’t let me take time off for the trip of a lifetime, things had rusted badly enough that I’d have to quit anyway. As it turned out, I had no problems getting three weeks away, two for the hike and one to relax afterward.

Isn’t this sort of a subset of fuzz testing, though? It’s still kinda awesome and I hope this gets more popular, along with concepts like “be liberal in what you accept as input, strict in what you output”, “fail early, fail often”, “always plan ahead for failures, they will happen”, “fail gracefully, recover/restart quickly”, etc.

I always try to do “If” tests on the input of procedures (or functions), and if an input value is not within bounds it’s either truncated (if this does not degrade the result or change the expected outcome of the function), or the call just outright fails and returns with an error code. This does burn a few CPU cycles at the start of, say, a function, but with sanity checks and error handling out of the way early it’s much easier to set up a nice tight/efficient loop later.