The team recently got a false-negative report on the SmartScreen phishing filter complaining that we fail to block firstline-trucking.com. I passed it along to our graders but then took a closer look myself. I figured that the legit site was probably at a very similar domain name, e.g. firstlinetrucking.com, but no such site exists.

Curious.

Simple Investigation Techniques

I popped open the Netcraft Extension and immediately noticed a few things. First, the site is a new site. Suspicious, since they claim to have been around since 2002. Next, the site is apparently hosted in the UK, although they brag about being “Strategically located at the U.S.-Canada border.” Sus... and just above that, they supply an address in Texas. Sus.
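The first red flag above (a brand-new domain attached to a business that claims decades of history) is easy to express in code. This is only a rough sketch of the idea, not how the Netcraft Extension works; the function name, the one-year grace period, and the registration dates in the example are all hypothetical. A legitimate old company can also move to a new domain, so treat a hit as a reason to look closer rather than as proof.

```python
from datetime import date


def domain_age_mismatch(registered: date, claimed_since_year: int,
                        today: date, grace_years: float = 1.0) -> bool:
    """Return True when the domain is much younger than the business claims to be.

    `registered` would come from a WHOIS/RDAP lookup or a tool like Netcraft;
    here it is simply passed in. `grace_years` is an arbitrary tolerance.
    """
    domain_years = (today - registered).days / 365.25
    claimed_years = today.year - claimed_since_year
    return claimed_years - domain_years > grace_years


# Hypothetical dates: a site "since 2002" on a domain registered months ago.
print(domain_age_mismatch(date(2025, 1, 1), 2002, today=date(2025, 6, 1)))  # True
# A domain registered shortly after the claimed founding year is not flagged.
print(domain_age_mismatch(date(2003, 1, 1), 2002, today=date(2025, 6, 1)))  # False
```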

Let’s take a look at that address in Google Maps. Hmm. A nondescript warehouse with no signage. Sus.

Well, let’s see what else we have. Let’s go to the “About Us” page and see who claims to be employed here. Right-click the CEO’s picture and choose “Copy image link.”

Paste that URL into TinEye to see where else that picture appears on the web. Ah, it’s from a stock photo site. Very sus.

Investigating the other employee photos and customer pictures from the “Customer testimonials” section reveals that most of them are also from stock photo sites. The unfortunately-named “Marry Hoe” has her picture on several other “About us” pages — it looks like she probably came with the template. Her profile page is all Lorem Ipsum placeholder text.

I was surprised that one of the biggest photos on the site didn’t show up in TinEye at all. Then I looked at the Developer Tools and noticed that the secret is revealed by the image’s filename — ai-generated-business-woman-portrait. Ah, that’ll do it.
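Since the filename gave the game away here, the same check can be run over every image URL on a page. The sketch below is just an illustration of that idea: the hint list is my own guess at useful substrings (only "ai-generated" comes from the filename above), and the example.com URLs are placeholders. Plenty of legit sites use stock photos, so a match is a hint, not a verdict.

```python
import posixpath
from urllib.parse import unquote, urlparse

# Substrings that suggest a stock or AI-generated image. Only "ai-generated"
# was actually seen on the scam site; the others are assumptions.
FILENAME_HINTS = ("ai-generated", "stock", "placeholder")


def filename_hints(image_url: str) -> list[str]:
    """Return the hint substrings found in the filename part of an image URL."""
    path = unquote(urlparse(image_url).path)
    name = posixpath.basename(path).lower()
    return [hint for hint in FILENAME_HINTS if hint in name]


print(filename_hints(
    "https://example.com/uploads/ai-generated-business-woman-portrait.jpg"
))  # ['ai-generated']
print(filename_hints("https://example.com/img/team.jpg"))  # []
```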

I tried searching for the phone number atop the site ((956) 253-7799) but there were basically no hits on Google. This is both very sus and very surprising, because often Googling for a phone number will turn up many complaints about scams run from that number.

Moar Scams!

Hmm… what about all of those blog posts on the site? They’re not all lorem ipsum text. Hrm… but they do reference other companies. Maybe these scammers just lifted the text from some legit company? It seems plausible that “New England Auto Shipping” is a legit company they stole this from. Let’s copy this text and paste it into Google:

I didn’t find the source (likely neautoshipping.com, an earlier version of the scam from October 2024), but I did find another live copy of the attack, hosted on a similar domain:

This version is hosted at firstline-vehicle.com with the phone number (908-505-5378) and an address in New Jersey. They’ve literally been copy/pasting their scam around!

The page title of this scam site doesn’t match the scammers though. Hmm… What happens if I look for “Bergen Auto Logistics” then?

Another scam site, bergen-autotrans.com, this one registered this month and CEO’d by a Stock Photo woman:

There are some more interesting photos here, including some that are less obviously faked:

It looks like there was an earlier version of this site in November 2024 at bergenautotrans.com that is now offline:

Searching around, we see that there’s also currently a legit business in New York named “Bergen Auto” whose name and reputation these scammers may have been trying to coast off of. And now some of the pieces are starting to make more sense: Bergen, New York is on the US/Canada border.

Searching for the string "Your car does not need be running in order to be shipped" turns up yet more copies of the scam, including britt-trucking.net with phone number (602) 399-7327:

Another random Stock Photo CEO is here, and our same General Manager now has a new name:

…and hey, look, it’s our old friends, now with a different logo on their shirts!

Interestingly, if you zoom in on the photo, you see that the name and logo don’t even match the scam site. The company logo and filename contain Sunni-Transportation, which was also found in the filename of Marry Hoe on the first site we looked at.

The same "Your car does not need be running in order to be shipped" string was also found on two now-offline sites, unitedauto-transport.com, and unitedautotrans.net.
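A copy/pasted sentence like this works as a fingerprint: if you already have a list of candidate pages, you can check each one for it instead of relying on a search engine. Here is a minimal sketch of that check, assuming you have already fetched the HTML yourself; the helper names are made up, and the matching is deliberately simple (case-insensitive, whitespace-insensitive, ignoring inline tags).

```python
import re
from html.parser import HTMLParser

# The telltale sentence quoted above, typo and all.
FINGERPRINT = "Your car does not need be running in order to be shipped"


class _TextExtractor(HTMLParser):
    """Collects the text nodes of an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []

    def handle_data(self, data: str) -> None:
        self.chunks.append(data)


def _normalize(text: str) -> str:
    # Collapse runs of whitespace and lowercase so line breaks don't matter.
    return re.sub(r"\s+", " ", text).strip().lower()


def contains_fingerprint(html: str, phrase: str = FINGERPRINT) -> bool:
    """Return True if the page text contains the phrase."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return _normalize(phrase) in _normalize(" ".join(parser.chunks))


page = "<p>Your car does not\n need be <b>running</b> in order to be shipped.</p>"
print(contains_fingerprint(page))  # True
print(contains_fingerprint("<p>We ship running cars only.</p>"))  # False
```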

Not a Phish, but definitely Fishy

I went back to our original complainant and asked for clarification — this site doesn’t seem to be pretending to be the site of any other company, but instead appears to be just entirely manufactured from AI and stock photos.

He explained that the attackers troll Craigslist[1] looking for folks buying used cars. They put up some fake listings, and then act as if the (fake) seller has chosen them as an escrow provider. After a bunch of paperwork, the victim buyer wires the attacker thousands of dollars for the nonexistent car. The attackers immediately send a fake tracking number that goes to an order tracking page that’s never updated. They’re abusing people who are risk-averse enough to seek out an escrow company to protect a big transaction, but who are not able to validate the bona fides of that “escrow company”… aka, smart humans. (Having bought houses thrice, I can say that validating the legitimacy of an escrow company is a very difficult task.) Escrow scams like this one are only one of several popular attacks; this guide and this one describe several scams and how to avoid them.

The Better Business Bureau had a writeup of vehicle escrow scams way back in 2020, and the FTC a year before. Reddit even has an automatic bot to explain the scam. In 2021, an Ohio man was sentenced to 14 years in prison for stealing over $10M via this sort of scam.

Unfortunately, creating a fake business almost entirely in pixels is a simple scam, and one that’s not trivial to protect against. In cases where no existing business’ reputation is being abused, there’s no organization that’s particularly incentivized to do the work to get the bad guys taken down. Phishing protection features like SafeBrowsing and SmartScreen are not designed to protect against “business practices scams.”

The very same things that make online businesses so easy to start — low overhead, no real-estate, templates and AIs can do the majority of the work — make it easy to invent fake businesses that only exist in the minds of their victims. After the scammers get found out, the sites disappear and the crooks behind them simply fade away.

I advised the reporter to report the fraud to the FTC, the Internet Crime Complaint Center, and also to Netcraft, who do maintain feeds of scam sites of all types, not just phishing/malware.

Stay safe out there!

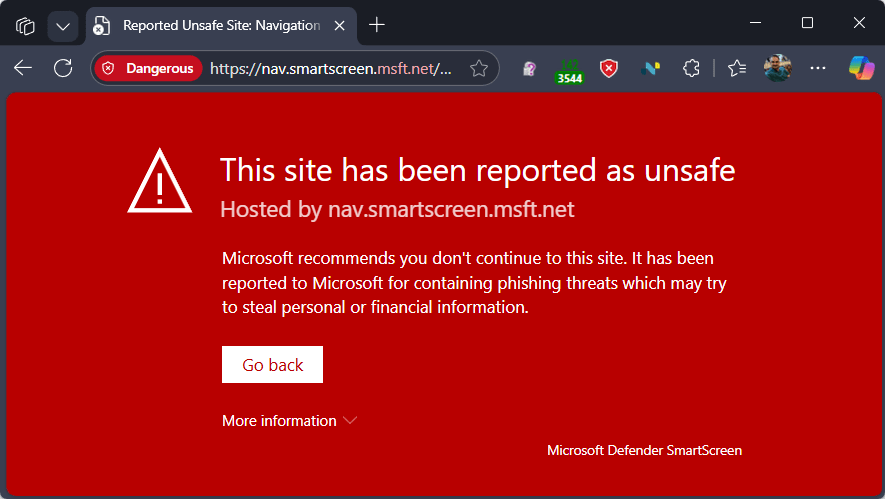

Update: As of September 12th, 2025, a new version of the escrow scam site is live:

https://fl-trans.com/

They’re using the same mix of stock photos and slightly edited media as the earlier versions:

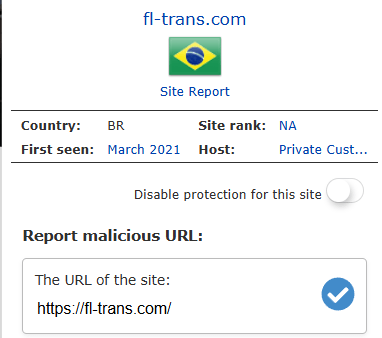

This one appears to be hosted in Brazil:

-Eric

PS: Holy cow. https://escrow-fraud.com/search.php

Looking through here, most of the sites are dead, but not all. Some have been live for years!

[1] In college, a friend fell victim to a different scam on Craigslist, the overpayment scam. They’d rented a 3-bedroom apartment and needed a 3rd roommate. They were contacted by an “international student” who needed a room and sent my friends a check $500 larger than requested. “Oops, would you mind wiring back that extra? I really need it right now!” the scammer begged. My kind friends wired back the “overpayment” amount, and a few days later were heartbroken to discover that the original check had, of course, not actually cleared. They were out the $500, a huge sum for two broke young college students.

This same overpayment scam is used in fake car sales too.