While most HTTPS sites only authenticate the server (using a certificate sent by the website), HTTPS also supports a mutual authentication mode, whereby the client supplies a certificate that authenticates the visiting user’s identity. Such a certificate might be stored on a SmartCard, or used as a part of an OS identity feature like Windows Hello.

To request mutual authentication, servers send a CertificateRequest message to the client during the HTTPS handshake, specifying a criteria filter that the browser will use to find a client certificate to satisfy the server’s request.

If a client certificate is supplied in the browser’s Certificate response to the server’s challenge, the browser proves the user’s possession of that certificate using the private key that matches that client certificate’s public key.

A client may choose not to send a certificate (either because no matching certificate is available, or because the user declined to supply a certificate that it had)—in such cases, the server may terminate the handshake (showing a Client Certificate Required error message) or it may continue the handshake and attempt to authenticate the user via other means.
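On the server side, the choice between terminating and continuing is often a single setting. Here is a minimal sketch using Python's standard `ssl` module; the helper name `make_server_context` and its parameters are hypothetical, and a real server would also need to load its own certificate with `load_cert_chain`.

```python
import ssl
from typing import Optional


def make_server_context(require_client_cert: bool,
                        ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a TLS server context that asks the client for a certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # CERT_REQUIRED: the handshake fails if the client sends no certificate.
    # CERT_OPTIONAL: a certificate is requested, but the handshake continues
    # without one so the application can authenticate the user another way.
    ctx.verify_mode = (ssl.CERT_REQUIRED if require_client_cert
                       else ssl.CERT_OPTIONAL)
    if ca_file is not None:
        # CA certificates used to validate whatever the client presents.
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

With `CERT_OPTIONAL`, the application can check whether a certificate arrived (for example via `SSLSocket.getpeercert()`) and fall back to another sign-in method if none did.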

Certificate Selection

The CertificateRequest message allows the server to specify criteria for the certificates it is willing to accept from the client, including details such as the certificate’s issuer, and key/signature/hash types.

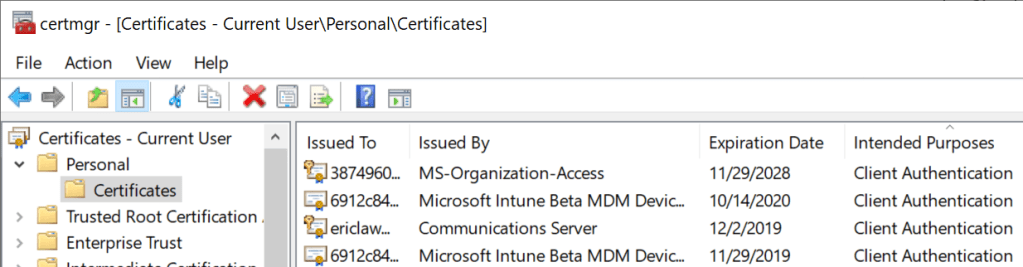

The browser consults the Operating System’s trust store (Keychain on Mac OS X, certmgr.msc on Windows) to find any candidate certificates (unexpired certificates with the Client Authentication purpose set and a private key available) that match the server-supplied filtering criteria:

The private key for a given certificate might be stored on a SmartCard — when a SmartCard is inserted, the certificate(s) on it are “virtually” propagated to the OS trust store for use by browsers and other applications.
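The candidate rules described above (unexpired, Client Authentication purpose, private key available, matching the server's criteria) can be sketched roughly as follows. This is a simplified model, not the browser's actual code: the `StoredCert` type is hypothetical, only expiry is checked (not the start of validity), and issuers are compared by a plain name rather than by full distinguished name or chain.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import AbstractSet, FrozenSet, List

CLIENT_AUTH_EKU = "1.3.6.1.5.5.7.3.2"  # OID for the Client Authentication purpose


@dataclass(frozen=True)
class StoredCert:  # hypothetical stand-in for an entry in the OS store
    subject_cn: str
    issuer_cn: str
    not_after: datetime
    ekus: FrozenSet[str]
    has_private_key: bool


def candidate_certs(store: List[StoredCert],
                    acceptable_issuers: AbstractSet[str],
                    now: datetime) -> List[StoredCert]:
    """Return the certificates that would be offered to the user."""
    return [
        c for c in store
        if c.not_after > now                 # unexpired
        and CLIENT_AUTH_EKU in c.ekus        # Client Authentication purpose
        and c.has_private_key                # possession can be proven
        # An empty issuer set means the server expressed no preference.
        and (not acceptable_issuers or c.issuer_cn in acceptable_issuers)
    ]
```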

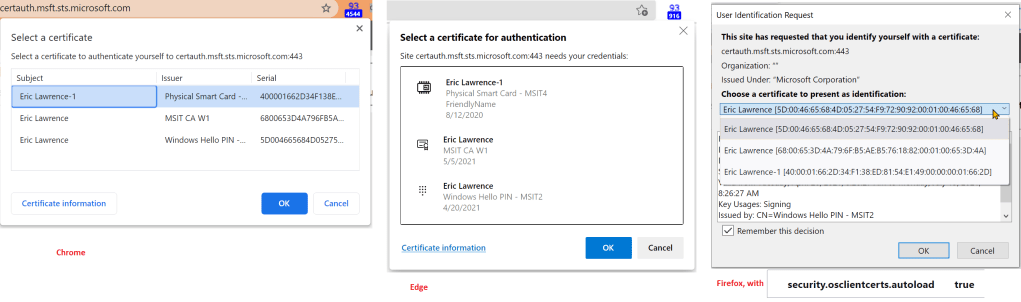

Certificates that meet the server’s filtering criteria are shown in a prompt:

If the user hits “Cancel”, the handshake is completed without sending a certificate. However, if the user selects a certificate, the browser caches that decision for the lifetime of the browser instance. The selected certificate will be resent on all new connections to the target origin and the prompt will not be shown again.

Historically, there was no good way to clear the selection decision short of restarting the browser entirely (but keep reading). In contrast, legacy IE offered two very awkward mechanisms: the Clear SSL state button in the Internet Control Panel and the ClearAuthenticationCache web API.

Update: “Forget” feature in Edge 102

The Edge team is now testing a feature (which will likely be available by default starting in Edge 102) to allow the user to “forget” which Client Certificate was selected.

Selection UI Change in Edge 93

In Edge 92 and earlier, default focus was set on the Cancel button to help prevent the user from accidentally sending their certificate to a site they didn’t want to get it.

That meant that hitting Enter would submit “No Cert” (despite a blue highlight on the first certificate in the list). Sending no certificate to a server that needed one would likely result in an authentication failure that could not be fixed without completely restarting all browser windows, as mentioned previously. This was very bad and very easily hit.

Now, in Edge 93, no certificate is highlighted and the Cancel button is no longer focused. This helps ensure that the user makes an explicit selection.

Sending a certificate now requires two clicks, either:

- Click the desired certificate, then click the Accept button, or

- Double-click the desired certificate.

Sending NoCert now requires one explicit click, on the Cancel button.

This UX change means users are much less likely to make a difficult-to-recover mistake. All in all, the new UX seems MUCH better.

Automatic Selection of Client Certificate

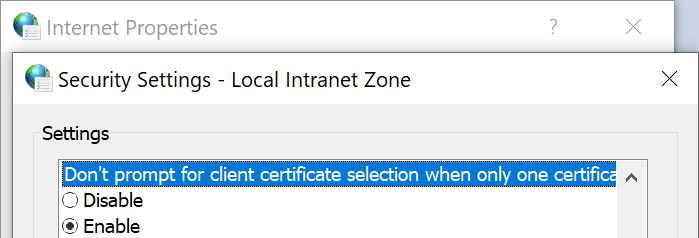

Internet Explorer and Edge Legacy offered a URLAction setting (Don’t prompt for client certificate selection when only one certificate exists, aka URLACTION_CLIENT_CERT_PROMPT), on-by-default for the Local Intranet Zone:

…whereby the browser would not prompt the user to select a certificate if the user only has one certificate that matches the server’s request. In such cases, the client would automatically send the matching certificate without showing a prompt.

For other zones, IE and Edge Legacy will prompt the user to select a certificate before any certificate is sent. This is a privacy measure, because if the browser silently sends the user’s identity to any website that asks for it, this is a “super-cookie” that would allow tracking that user’s identity across sites. Also, the client’s certificate might directly contain personally identifiable information about the user (e.g. their email address, office phone number, home address, etc).

Chromium (and thus Chrome, Edge, Brave, Opera, Vivaldi) largely does not use the concept of Zones, so instead the AutoSelectCertificateForUrls policy exists. This policy allows an IT administrator to configure clients to automatically send certificates to specified websites that request them, which can be used to satisfy the need to have, say, the user’s Windows Hello certificate sent to *.login.microsoft.com sites.

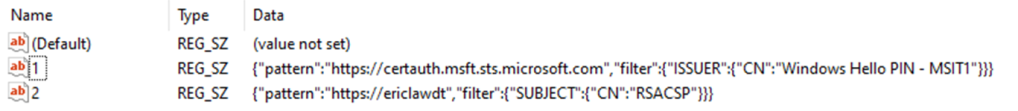

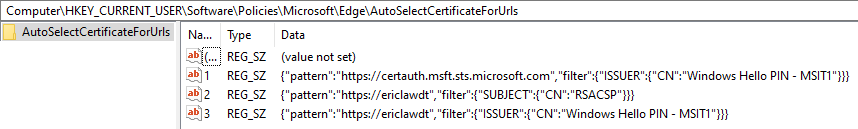

Here are two examples of policies: the first selects the first certificate issued by “Windows Hello PIN – MSIT1” and the second rule selects the certificate with a SubjectCN=”RSACSP”.
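For reference, each entry in the policy is a JSON string with a `pattern` and a `filter`. Here is a small sketch that generates two such entries; the `make_rule` helper and the `https://[*.]example.com` pattern are placeholders of mine, and the CN values must match the certificate fields exactly as they appear in your environment.

```python
import json
from typing import List


def make_rule(pattern: str, field: str, cn: str) -> str:
    """Build one AutoSelectCertificateForUrls entry as a JSON string."""
    if field not in ("ISSUER", "SUBJECT"):
        raise ValueError("field must be 'ISSUER' or 'SUBJECT'")
    rule = {"pattern": pattern, "filter": {field: {"CN": cn}}}
    # ensure_ascii=False keeps non-ASCII characters in the CN readable.
    return json.dumps(rule, ensure_ascii=False)


rules: List[str] = [
    # Select a certificate by the CN of its issuer.
    make_rule("https://[*.]example.com", "ISSUER", "Windows Hello PIN – MSIT1"),
    # Select a certificate by the CN of its subject.
    make_rule("https://[*.]example.com", "SUBJECT", "RSACSP"),
]

for rule in rules:
    print(rule)
```

On Windows, each generated string would typically be stored as its own numbered value under the policy's registry key.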

If you’re trying to set a rule whereby multiple client certificates are valid candidates and the client should just return the first match found, just add another rule with the same pattern and a different filter.

For instance, a pair of rules with the same pattern can use SubjectCN=“RSACSP” if a matching certificate is found, or fall back to a certificate with IssuerCN=“Windows Hello PIN – MSIT1” if not.

If you want to use a wildcard in the pattern, you must use the Content Settings Pattern syntax; e.g. https://[*.]example.com will match https://example.com and all of its subdomains. Chromium’s source documents the syntax as follows:

// - [*.]domain.tld (matches domain.tld and all sub-domains)

// - host (matches an exact hostname)

// - a.b.c.d (matches an exact IPv4 ip)

// - [a:b:c:d:e:f:g:h] (matches an exact IPv6 ip)

// - file:///tmp/test.html (a complete URL without a host)

You may have noticed that the AutoSelectCertificateForUrls policy has one major limitation: it always sends the user’s first matching certificate to the selected site. Some users might have more than one certificate that matches the policy (for instance, some enterprises have both “test” and “production” certificates).

To address this shortcoming, the Edge team introduced a new policy in Edge 81. The new ForceCertificatePromptsOnMultipleMatches policy does as it says: if the client has multiple certificates that could be used to satisfy the {OriginFilter->CertificateFilter} policy specified by an AutoSelectCertificateForUrls policy, then instead of simply sending the first matching certificate, the browser will show a certificate selection prompt filtered to the certificates that match the policy.
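To make the difference between the two policies concrete, here is a toy model of the decision. It is a sketch of the behavior as described in this post, not Chromium's implementation: the `Cert` and `Rule` types and the `decide` function are hypothetical, rules are assumed to already apply to the requesting site, and what happens when nothing matches is left out of scope.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class Cert:
    subject_cn: str
    issuer_cn: str


@dataclass(frozen=True)
class Rule:
    field: str  # "SUBJECT" or "ISSUER"
    cn: str


def rule_matches(rule: Rule, cert: Cert) -> bool:
    value = cert.subject_cn if rule.field == "SUBJECT" else cert.issuer_cn
    return value == rule.cn


def decide(rules: Sequence[Rule], certs: Sequence[Cert],
           force_prompt_on_multiple_matches: bool) -> Tuple[str, List[Cert]]:
    """Return ("send", [cert]), ("prompt", certs) or ("no_match", [])."""
    # Collect matches in rule order, so earlier rules take priority.
    ordered: List[Cert] = []
    for rule in rules:
        for cert in certs:
            if rule_matches(rule, cert) and cert not in ordered:
                ordered.append(cert)
    if not ordered:
        return ("no_match", [])
    if force_prompt_on_multiple_matches and len(ordered) > 1:
        # Show a prompt filtered to the certificates that match the policy.
        return ("prompt", ordered)
    # Default behavior: silently send the first match.
    return ("send", [ordered[0]])
```

With a “test” and a “production” certificate from the same issuer and a single issuer rule, the default is to send whichever comes first; with the force-prompt flag set, the user chooses between the two.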

Sidebar: Automatic Selection in WebView2

The WebView2 Control does not respect most Group Policies. Instead, WebView2 has added a ClientCertificateRequested API that allows apps to select the certificate rather than showing the dialog.

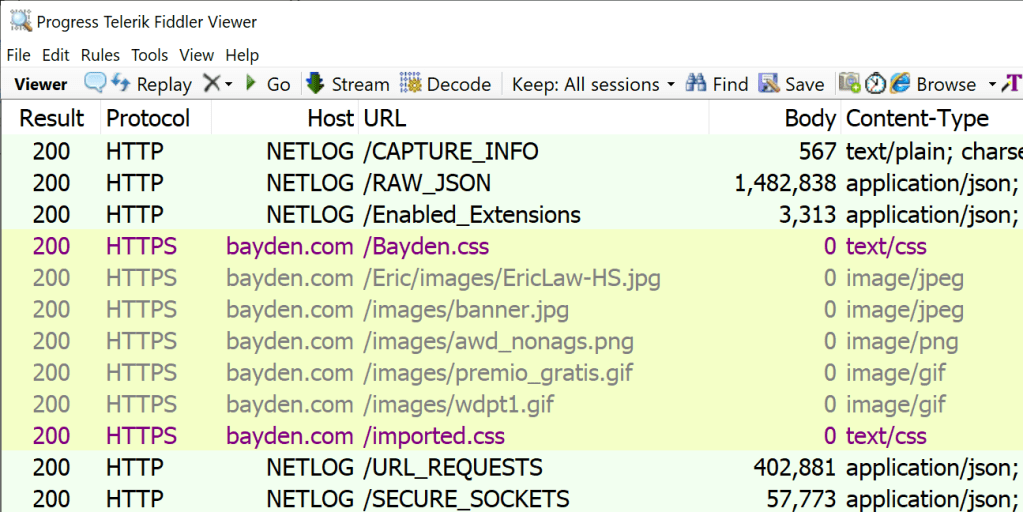

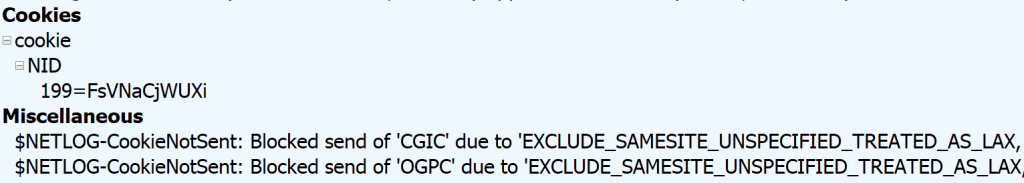

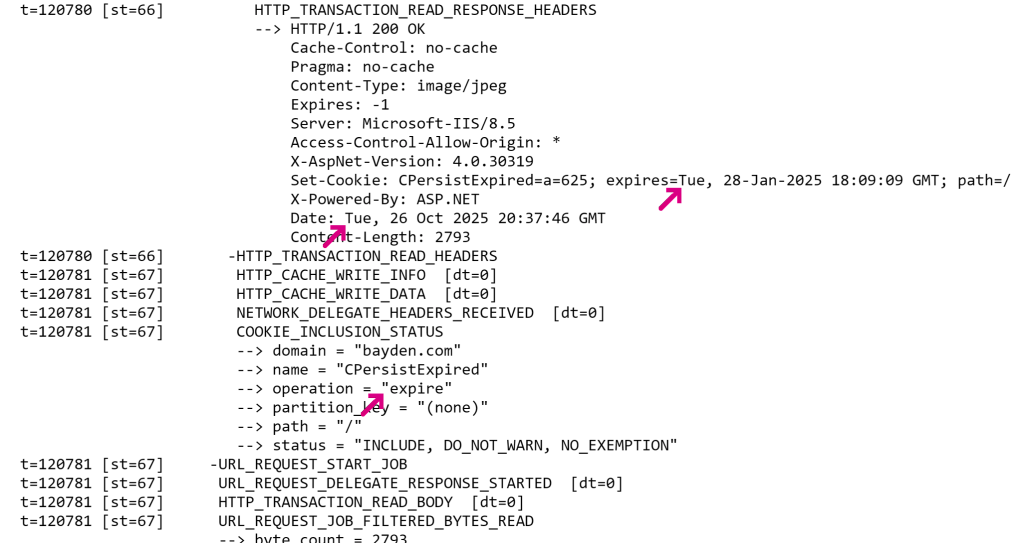

Debugging

If you find that Microsoft Edge shows a client certificate selection prompt in one scenario where other browsers do not, one possibility is that the site in question is not actually requesting a client certificate from those other browsers for some reason. For instance, some web authentication flows, including Microsoft’s AAD login, take the browser’s User-Agent into account when deciding what authentication mechanisms to use with the client.

In order to understand exactly what’s going on with Client Authentication, collect Network Traffic logs. SSL_HANDSHAKE_MESSAGE_RECEIVED messages of type 13 represent the client certificate request.
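If you would rather search a captured log with a script than by eye, something like the following sketch may help. It assumes the exported log is a JSON document with a `constants.logEventTypes` table mapping event names to numeric ids and an `events` array whose entries carry a numeric `type` and a `params` object; treat that layout as an assumption and check it against your own capture. The function name `find_certificate_requests` is mine.

```python
from typing import Any, Dict, List

CERTIFICATE_REQUEST = 13  # TLS handshake message type for CertificateRequest


def find_certificate_requests(log: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return the events in which the server requested a client certificate."""
    event_types = log.get("constants", {}).get("logEventTypes", {})
    wanted = event_types.get("SSL_HANDSHAKE_MESSAGE_RECEIVED")
    if wanted is None:
        return []  # the log does not define this event type
    return [
        event for event in log.get("events", [])
        if event.get("type") == wanted
        and event.get("params", {}).get("type") == CERTIFICATE_REQUEST
    ]
```

Load the capture with `json.load` and pass the resulting dictionary in; an empty result suggests the server never asked this client for a certificate.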

-Eric

Bonus trivia

- Some smart people persuasively argue that mutually-authenticated TLS is a misfeature.

- Registry-specified certificate selection policies apply across browser profiles, meaning that they are in force even when the user is in an Incognito browser session (!!)

- A user’s certificate selection applies for the entire browser session, making logout for ClientCert-authenticated sites a challenge (addressed in Edge 102).

- Client Certificate prompting behavior on Android is weird. Unfortunately, for platform reasons, the AutoSelectCertificateForUrls policy cannot be implemented there.

- Client Certificate authentication is generally not available in 3rd-party browsers on iOS (Safari has access to the keychain).

- There are some test servers available.

The general notion of “how Client Certificates were supposed to work” was that each user would have one certificate for each organization to which they belong, issued by that organization’s root certificate. When visiting that organization’s servers, the server would send in the CertificateRequest message the identifier(s) of the root certificate(s) to which acceptable client certificates chain (using the certificate_authorities structure). The visiting client would then filter the certificates available for selection to only those that chain to that root (hopefully one certificate).

So, say I have two certificates, e.g. USA-NationalID and Microsoft-EmployeeID. When I visit https://portal.microsoft.com, Microsoft sends a CertificateRequest with MicrosoftRootCA in the certificate_authorities field. My browser automatically filters my client certificates list to just the Microsoft-EmployeeID certificate and then sends that. In contrast, when I visit https://irs.gov, the government site sends a CertificateRequest with a USGovernmentRootCA in the certificate_authorities field. My browser automatically filters my client certificates list to just the USA-NationalID certificate and sends that.
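The idealized filtering in that example can be sketched in a few lines. This is a toy model: the `ClientCert` type is hypothetical, chains are represented as plain issuer names rather than real distinguished names, and the intermediate CA names are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class ClientCert:
    name: str
    chain: Tuple[str, ...]  # issuer names from the leaf's issuer up to the root


def filter_by_authorities(certs: Sequence[ClientCert],
                          certificate_authorities: Sequence[str]) -> List[ClientCert]:
    """Keep only certificates that chain to a CA named by the server."""
    if not certificate_authorities:
        # An empty list means the server did not restrict the issuer.
        return list(certs)
    wanted = set(certificate_authorities)
    return [c for c in certs if wanted.intersection(c.chain)]


wallet = [
    ClientCert("USA-NationalID", ("USGovernmentIssuingCA", "USGovernmentRootCA")),
    ClientCert("Microsoft-EmployeeID", ("MicrosoftIssuingCA", "MicrosoftRootCA")),
]

for authority in ("MicrosoftRootCA", "USGovernmentRootCA"):
    names = [c.name for c in filter_by_authorities(wallet, [authority])]
    print(authority, "->", names)
```

When the server names its root, the list collapses to a single certificate and no real choice is left for the user to make.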

In practice, unfortunately, things haven’t worked out that way. Most organizations have not had the infrastructure or discipline to configure things to work like that, and as a consequence you end up with varying client behavior.

Firefox doesn’t seem to filter the certificate list, but it does offer a “Remember this decision” checkbox which presumably reduces user annoyance.

Firefox does not respect the Windows Trust Store, so each client certificate must be manually loaded into Firefox’s configuration (unless you set the security.osclientcerts.autoload preference to true). This is a hassle, but it tends to result in a somewhat “cleaner” experience where the user isn’t distracted by random certificates that might be cluttering Windows’ cert store.

Selection UI (as of June 2021)

Edge’s UI shows the certificate’s Friendly Name and includes an icon to try to help convey the type of client certificate (SmartCard, Windows Hello, Traditional).

In some cases, organizations are generating invalid client certificates but expecting them to work, leading to compat accommodations like the FEATURE_CLIENTAUTHCERTFILTER Feature Control Key.

In the browser, SmartCards can be used in two ways: HTTPS Client Certificate Authentication and Windows Integrated Authentication.

- Straight TLS mutual authentication, as described above.

- Windows Integrated Authentication, which occurs when visiting a website that sends a WWW-Authenticate: Negotiate header. The client may automatically send the user’s login credentials (Intranet Zone). Or, if those creds do not work or the Zone is not configured for automatic credential release (Non-intranet), the user will be prompted for credentials to use. In Edge 79, the user would get a prompt with two blank fields (“Username” and “Password”). In Edge 80 or later, upon noticing that the user has configured Windows Hello, the user will be shown the Windows Hello auth dialog that allows the user to use their face, type a PIN, use a SmartCard, etc. So, now Edge 80 matches Edge Legacy (v18 and lower).

Low Level Details 1

Low Level Details 2

Nice discussion (with pictures) of setting up client cert auth on IIS.

In Windows 10 Apps, the AppContainer must have the sharedUserCertificates capability to use certificates from the trust store.

RFC 5246 (TLS 1.2) describes the client’s Certificate message as follows:

This is the first message the client can send after receiving a ServerHelloDone message. This message is only sent if the server requests a certificate. If no suitable certificate is available, the client MUST send a certificate message containing no certificates. That is, the certificate_list structure has a length of zero. If the client does not send any certificates, the server MAY at its discretion either continue the handshake without client authentication, or respond with a fatal handshake_failure alert. Also, if some aspect of the certificate chain was unacceptable (e.g., it was not signed by a known, trusted CA), the server MAY at its discretion either continue the handshake (considering the client unauthenticated) or send a fatal alert.

CertificateVerify signs a message using the client certificate’s private key, proving possession.

CertOpenStore “my” store

https://docs.microsoft.com/en-us/windows/desktop/api/wincrypt/nf-wincrypt-certopenstore

ClientAuthIssuer trust store.

Hard Problems: Fetch in Serviceworker scenario — how can the user select a certificate when no UI is allowed?