Authenticating to websites in browsers is complicated. There are numerous different approaches:

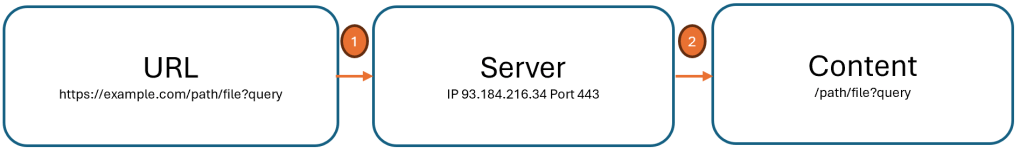

- the popular “Web Forms” approach, where username and password (“credentials”) are collected from a website’s Login page and submitted in an HTTPS POST request

- credentials passed in every request via the standard Authorization HTTP header, sent in response to the server’s WWW-Authenticate challenge

- TLS handshakes augmented by client certificates

- crypto-backed FIDO2/Passkeys via the WebAuthn API

Each of these authentication mechanisms has different user-experience effects and security properties. Sometimes, multiple systems are used at once, with, for example, a Web Forms login being bolstered by multifactor authentication.

In most cases, however, authentication mechanisms are only used to verify the user’s identity. After that process completes, the user is sent a “token” that they may send in future requests in lieu of repeating the authentication process on every operation.

These tokens are commonly opaque to the client browser: the browser simply sends the token on subsequent requests (often in an HTTP cookie, a fetch()-set HTTP header, or within a POST body) and the server evaluates the token’s validity. If the client’s token is missing, invalid, or expired, the server will send the user’s browser through the authentication process again.
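To make the issue-then-validate flow concrete, here is a minimal sketch of the server side in Python. Everything in it is an assumption for illustration: the in-memory `_sessions` table, the one-hour `SESSION_LIFETIME_SECONDS`, and the function names are hypothetical, and a real site would use its framework’s session machinery rather than this.

```python
import secrets
import time
from typing import Dict, Optional, Tuple

# Hypothetical lifetime; real sites choose their own policy.
SESSION_LIFETIME_SECONDS = 3600

# token -> (user, expiry time). An in-memory stand-in for a session store.
_sessions: Dict[str, Tuple[str, float]] = {}


def issue_token(user: str, now: Optional[float] = None) -> str:
    """Called once the user has completed authentication."""
    now = time.time() if now is None else now
    token = secrets.token_urlsafe(32)  # opaque: carries no meaning for the client
    _sessions[token] = (user, now + SESSION_LIFETIME_SECONDS)
    return token


def validate_token(token: Optional[str], now: Optional[float] = None) -> Optional[str]:
    """Return the user for a valid token, or None to force re-authentication."""
    now = time.time() if now is None else now
    if token is None:
        return None  # missing
    entry = _sessions.get(token)
    if entry is None:
        return None  # invalid (never issued, or already discarded)
    user, expires_at = entry
    if now >= expires_at:
        del _sessions[token]  # expired
        return None
    return user
```

Note that `validate_token` looks only at the token itself. Nothing about the caller is checked, which is exactly the property the rest of this post worries about.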

Threat Model

Notably, the token represents a verified user’s identity — if an attacker manages to obtain that token, they can send it to the server and perform any operation that the legitimate user could. Obviously, then, these tokens must be carefully protected.

For example, tokens are often stored in HttpOnly cookies to help limit the threat of a cross-site scripting (XSS) attack: if an attacker manages to exploit a script injection inside a victim site, the attacker’s injected script cannot simply copy the token out of the document.cookie property and transmit it back to themselves to freely abuse. However, HttpOnly isn’t a panacea, because a script injection can allow an attacker to use the victim’s browser as a sock puppet wherein the attacker simply directs the victim’s own browser to issue whatever requests are desired (e.g. “Transfer all funds in the account to my bitcoin wallet <x>”).
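As a rough illustration, this is how a server might build such a cookie using Python’s standard `http.cookies` module. The cookie name `session`, the helper name `build_session_cookie`, and the choice of the extra `Secure`, `SameSite`, and `Path` attributes are my assumptions, not anything a particular site is known to use.

```python
from http.cookies import SimpleCookie


def build_session_cookie(token: str) -> str:
    """Return the value for a Set-Cookie response header (hypothetical helper)."""
    cookie = SimpleCookie()
    cookie["session"] = token
    cookie["session"]["httponly"] = True   # hidden from document.cookie
    cookie["session"]["secure"] = True     # only sent over HTTPS
    cookie["session"]["samesite"] = "Lax"  # assumption: a common default
    cookie["session"]["path"] = "/"
    return cookie["session"].OutputString()


if __name__ == "__main__":
    print("Set-Cookie:", build_session_cookie("abc123"))
```

The HttpOnly flag only stops script from reading the value. The browser still attaches the cookie to requests that injected script triggers, which is why the sock puppet attack still works.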

Beyond XSS attacks conducted from the web, there are two other interesting threats: local malware, and insider threats. Protecting against these threats is akin to trying to keep secrets from yourself.

In the malware case, an attacker who has managed to get malicious software running on the user’s PC can steal tokens from wherever they are stored (e.g. the cookie database, or even the browser process’s memory at runtime) and transmit them back to themselves for abuse. Such attackers can also usually steal passwords from the browser’s password manager. However, stolen tokens could be more valuable than stolen passwords, because a given site may require multi-factor authentication (e.g. confirmation of logins from a mobile device) to use a password whereas a valid token represents completion of the full login flow.

In the insider threat scenario, an organization (commonly, a financial services firm) has employees that perform high value transactions from machines which are carefully secured, audited, and heavily monitored to ensure employee compliance with all mandated security protocols. In the case of an insider threat, whereby a rogue employee hopes to steal from their employer, the attacker may steal their own authentication token (or, better yet, a token from a colleague’s unlocked PC), and take that token to use on a different client that is not secured and monitored by the employer. By abusing the auth token from a different device, the attacker may evade detection long enough to abscond with their ill-gotten gains.

SaaS Expanded the Threat

In the old days of corporate environments in the 1990s and 2000s, an attacker who stole a token from an Enterprise user had a limited ability to use it, because the enterprise’s servers were only available on the victim’s Intranet, not reachable from the public internet. Now, however, many enterprises mostly rely upon third-party software sold as a “service” that is available from anywhere on the Internet. An attacker who steals a token from a victim can abuse that token from anywhere in the world.

Root Cause

All of these threats have a common root cause: nothing prevents a token from being used in a different context (device/location) than the one in which it was issued.

Browser changes to raise the bar against local theft of cookies are (necessarily) of limited effectiveness, and always will be.

While some sites attempt to implement theft detection for their tokens (e.g. requiring the user to reauthenticate and obtain a new token if the client’s IP address or geographic location changes), such protections are complex to implement and can result in annoying false positives (e.g. when a laptop moves from the office to the coffee shop or the like).
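A deliberately naive sketch shows why the false positives happen. The rule here (“reauthenticate whenever the IP address differs from the one the token was issued to”) and the names `issue_token` and `needs_reauth` are hypothetical; real detection systems weigh many more signals.

```python
import secrets
from typing import Dict

# token -> IP address the token was issued to (in-memory stand-in).
_issued_to: Dict[str, str] = {}


def issue_token(client_ip: str) -> str:
    token = secrets.token_urlsafe(32)
    _issued_to[token] = client_ip
    return token


def needs_reauth(token: str, client_ip: str) -> bool:
    """True if the request should be bounced back through login."""
    original_ip = _issued_to.get(token)
    return original_ip is None or original_ip != client_ip


if __name__ == "__main__":
    office, coffee_shop, attacker = "203.0.113.5", "198.51.100.7", "192.0.2.99"
    token = issue_token(office)
    print(needs_reauth(token, office))       # same network as issuance
    print(needs_reauth(token, attacker))     # stolen token, different network
    print(needs_reauth(token, coffee_shop))  # legitimate user who moved
```

The server cannot tell the last two cases apart: the thief and the traveling laptop look identical under this rule, so catching one means annoying the other.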

Similarly, organizations might use Conditional Access or client certificates to prevent a stolen token from being used from a machine not managed by the enterprise, but these technologies aren’t always easy to deploy. However, conditional access and client certificates point at an interesting idea: what if a token could be bound to the client that received it, such that the token cannot be used from a different client?

A Fix?

Update: The Edge team has decided to remove Token Binding starting in Edge 130.

Token binding, as a concept, has existed in multiple forms over the years, but in 2018, a set of Internet Standards was finalized to allow binding cookies to a single client. While the implementation was complex, the general idea is simple:

- Store a secret key on a client in a storage area that prevents it from being copied (“non-exportable”).

- Have the browser “bind” received cookies to that secret key, such that the cookies will not be accepted by the server if sent from another client.
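The two steps above can be sketched as a toy model. To be loud about the assumptions: this is not the Token Binding wire protocol. Real Token Binding uses an asymmetric key pair, where the server only ever sees the public key and the client signs a value derived from the TLS connection. Python’s standard library has no asymmetric signatures, so this sketch swaps in an HMAC with a shared key as a stand-in, and every class and method name is hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Dict


class ClientKeyStore:
    """Stand-in for non-exportable key storage on one device."""

    def __init__(self) -> None:
        self._key = secrets.token_bytes(32)

    def verification_key(self) -> bytes:
        # ASSUMPTION: with a real key pair this would be the PUBLIC key.
        # The HMAC stand-in has to hand over the same secret instead.
        return self._key

    def prove(self, connection_value: bytes) -> bytes:
        """Prove possession of the key for this particular connection."""
        return hmac.new(self._key, connection_value, hashlib.sha256).digest()


class Server:
    def __init__(self) -> None:
        self._bindings: Dict[str, bytes] = {}  # token -> verification key

    def issue_bound_token(self, verification_key: bytes) -> str:
        token = secrets.token_urlsafe(32)
        self._bindings[token] = verification_key
        return token

    def accepts(self, token: str, connection_value: bytes, proof: bytes) -> bool:
        key = self._bindings.get(token)
        if key is None:
            return False
        expected = hmac.new(key, connection_value, hashlib.sha256).digest()
        return hmac.compare_digest(expected, proof)


if __name__ == "__main__":
    server, victim, attacker = Server(), ClientKeyStore(), ClientKeyStore()
    token = server.issue_bound_token(victim.verification_key())
    value = secrets.token_bytes(16)  # stand-in for a per-connection value
    print(server.accepts(token, value, victim.prove(value)))
    print(server.accepts(token, value, attacker.prove(value)))
```

The attacker here holds a perfect copy of the token, but it is useless without a proof made by the victim’s key. That only helps if the key truly cannot leave the victim’s device, which is where the storage question discussed next comes in.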

Token binding had been implemented by Edge Legacy (Spartan) and Chromium, but unfortunately for this feature, it was ripped out of Chrome right around the time that the Standards were finalized, just as Microsoft replatformed Edge atop Chromium.

As the Edge PM for networking, I was left in the unenviable position of trying to figure out why this had happened and what to do about it.

I learned that in Chromium’s original implementation of token binding, the per-site secret was stored in a plain file directly next to the cookie database. This design would’ve mitigated the threat of token theft via XSS attack, but provided no protection against malware or insiders, which could steal the secrets file just as easily as stealing the cookie database itself.

To provide security against malware and insiders, the secret must be stored somewhere where it cannot be taken off the machine. The natural way to do that would be to use the Trusted Platform Module (TPM), which is special hardware designed to store “non-exportable” secrets. While interacting with the TPM requires different code on each OS platform, Chromium surmounts that challenge for many of its features. The bigger problem was that some TPMs turned out to offer very low performance, and some pages could see their load delayed for dozens of seconds while the browser communicated with the TPM.

Ultimately, the Edge team brought Token Binding back to the new Chromium-based Edge browser with two major changes:

- The secrets were stored using Windows 10’s Virtual Secure Mode, offering consistently high performance, and

- Token-binding support is only enabled for administrator-specified domains via the AllowTokenBindingForUrls Group Policy

This approach ensured that Token Binding would be supported with high performance, but with the limitations that it was only supported on Win10+, and not as a generalized solution any website could use.

Even when these criteria are met, Token Binding provides limited protection against locally-running malware: while the attacker can no longer take the token off the box to abuse elsewhere, they can still use the victim PC as a sock puppet, driving a (hidden) browser instance to whatever URLs they like, abusing the bound token locally.

Beyond those limitations, Token Binding has a few other core challenges that make it difficult to use. First, the web server frontend must include support for Token Binding, and many did not. Second, Token Binding binds the authentication tokens to the TLS connection to the server, making it incompatible with TLS-intercepting proxy servers (often used for threat protection). While such proxy servers are not very common, they are more common in exactly the sorts of highly-regulated environments where token binding is most desired. (An unimplemented proposal aimed to address this limit.)

While token binding is a fascinating primitive to improve security, it’s very complex, especially when considering the deployment requirements, narrow support, and interactions with already-extremely-complicated changes on the way for cookies.

What’s Next?

The Chrome team is experimenting with a new primitive called Device Bound Session Credentials. Read the explainer — it’s interesting! The tl;dr of the proposal is that the client will maintain a securely stored (e.g. on the TPM) private key, and a website can demand that the client prove its possession of that private key.

-Eric

PS: I’ve personally always been more of a fan of client certificates used for mutual TLS authentication (mTLS), but they’ve long been hampered by their own shortcomings, some of which have been mitigated only recently and only in some browsers. mTLS has made a lot of smart and powerful enemies over the decades. See some criticisms here and here.