I’ve written a lot about Private Browsing Mode, and a bit about the behavior of “Session restore”, but one topic I haven’t covered is how “Sessions” work while in Private mode.

Session Sharing

Historically, one of the top-reported Private Mode issues was that users opened a new Private window and unexpectedly found that they were already logged into a site they had used earlier.

From one example issue:

> The typical explanation when users report issues like this is that the user has multiple Incognito windows open and does not realize that fact. Incognito windows (perhaps surprisingly) are not isolated from one another, and closing one Incognito window does not end the Incognito session. The “background” Incognito window hangs on to all of the login tokens and when you open a new Incognito window, all of those tokens remain available in that new window. Only when all Incognito windows are closed is the session ended and the login tokens expired.

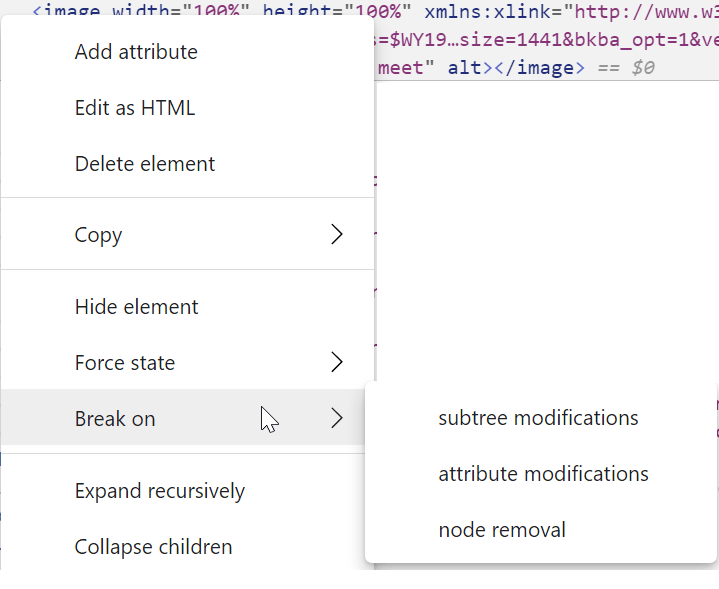

To address this, back in 2018 Chromium implemented an Incognito Window Counter to help the user understand when there are multiple windows in a single Incognito Browsing Session:

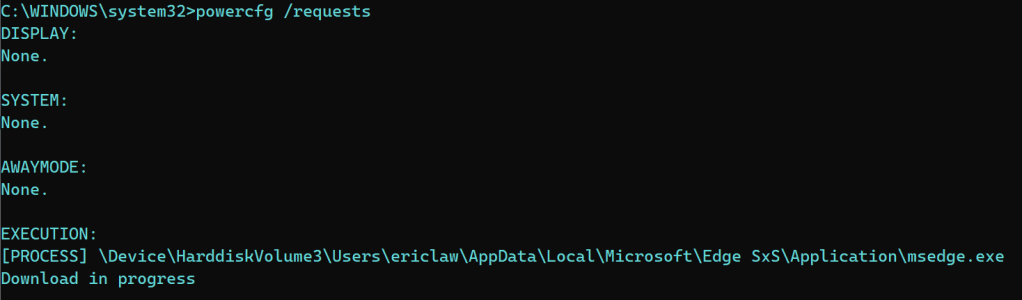

If there’s a number in parentheses after the “InPrivate” text, it means that there are more InPrivate windows open in the background. You’ll need to close all of them to end the InPrivate session.
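To make the lifetime rule concrete, here is a toy model of the behavior described above: one shared session per profile, a window count, and cleanup only when the count reaches zero. This is a sketch of the idea, not browser code; the `PrivateSession` class and the `example.test` cookie are made up for illustration.

```python
class PrivateSession:
    """Toy model of a single private session shared by all private windows."""

    def __init__(self):
        self.cookies = {}      # login tokens visible to every private window
        self.open_windows = 0  # the number the window counter would reflect

    def open_window(self):
        self.open_windows += 1
        return self.open_windows

    def close_window(self):
        if self.open_windows == 0:
            raise RuntimeError("no private windows are open")
        self.open_windows -= 1
        # The session only ends when the LAST private window closes.
        if self.open_windows == 0:
            self.cookies.clear()


session = PrivateSession()
session.open_window()                             # window 1
session.cookies["example.test"] = "login-token"   # user logs in
session.open_window()                             # window 2, same session
session.close_window()                            # close one window
still_logged_in = "example.test" in session.cookies
session.close_window()                            # close the last window
logged_in_after_all_closed = "example.test" in session.cookies
```

In this model, closing one of the two windows leaves the login token in place; it is only discarded once the last window is closed.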

Can We Isolate Each Private Window?

> Cant we just provide a real InPrivate window every time one is
> opened regardless of whether one is open or not already??

Offering “N-isolated InPrivate Windows” (Issue 1024731) is an occasionally-requested feature, but satisfying it would have some tricky subtleties.

In theory, yes, software’s just bits and we can code them any way we want—IE8 exposed an explicit “New Session” command, for instance. So we could make it such that every invocation of “New InPrivate Window” creates a new and isolated Web Session.

Implementing “N-isolated InPrivate windows” has two significant hurdles:

- Code – Chromium is presently designed with the idea that there’s a maximum of one InPrivate session per profile. We’d have to carefully trace through every use of the active Profile to create new partitions supporting “N-isolated InPrivate Sessions”, and figure out how UX features like tearing tabs out from an isolated window ought to behave.

- UX – Users might not really want every InPrivate window to be isolated from every other InPrivate window. For instance, if you have a website open InPrivate and it opens a popup, you probably need that popup to be in the same Session as the parent window, or the flow (script access, any login cookies, etc.) is going to break. In a world of “N-isolated InPrivate Sessions”, if you don’t bind the Session tightly to the window, you need to find some way to allow the user to distinguish which windows belong to which Sessions (to ensure, for instance, that closing the last InPrivate window in that isolated Session cleans up exactly the expected state).

Now, in a world of tabbed browsing where most site-initiated popups are automatically created as new tabs in the same window, perhaps we could just punt on the hard UX challenges and decree that every top-level InPrivate Window is isolated to only itself (and e.g. forbid tearing tabs out of that window). It’s hard to say whether the total cost of such a feature would justify the user-perceived benefit.
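The bookkeeping this would require can be sketched with another toy model. It assumes one possible design (not anything Chromium implements): each user-opened window gets a fresh session, a popup inherits its opener’s session, and a session’s state is discarded when its last window closes. The `IsolatedSessions` class and its methods are hypothetical names.

```python
import itertools


class IsolatedSessions:
    """Toy model: every top-level private window gets its own session."""

    def __init__(self):
        self._window_ids = itertools.count(1)
        self._session_ids = itertools.count(1)
        self.window_session = {}   # window id -> session id
        self.session_cookies = {}  # session id -> cookie jar

    def new_window(self, opener=None):
        window = next(self._window_ids)
        if opener is None:
            # User-initiated window: create a brand-new isolated session.
            session = next(self._session_ids)
            self.session_cookies[session] = {}
        else:
            # Site-initiated popup: share the opener's session so the flow works.
            session = self.window_session[opener]
        self.window_session[window] = session
        return window

    def cookies(self, window):
        return self.session_cookies[self.window_session[window]]

    def close_window(self, window):
        session = self.window_session.pop(window)
        # Clean up only when no remaining window belongs to this session.
        if session not in self.window_session.values():
            del self.session_cookies[session]


browser = IsolatedSessions()
a = browser.new_window()
browser.cookies(a)["example.test"] = "login-token"
popup = browser.new_window(opener=a)   # shares a's session
b = browser.new_window()               # isolated from a
popup_sees_login = "example.test" in browser.cookies(popup)
b_sees_login = "example.test" in browser.cookies(b)
browser.close_window(a)                # popup keeps the session alive
session_alive = "example.test" in browser.cookies(popup)
browser.close_window(popup)            # last window in that session
remaining_sessions = len(browser.session_cookies)
```

Even in this tiny model, closing window `a` does not end its session because the popup still belongs to it, which is exactly the kind of state a user would need some way to see.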

Rather than using InPrivate for all scenarios, you might also choose to create extra “ephemeral” profiles that throw away all cookies/cache/credentials/etc every time they’re closed, or you can use the existing-by-default “Guest” account for the same purpose.

-E

PS: Long ago, I built a Web Sessions test page in case you’d like to explore the behavior described in this post.