Last Update: October 1, 2025

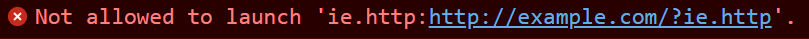

For security reasons, Microsoft Edge 76+ and Chrome impose a number of restrictions on file:// URLs, including forbidding navigation to file:// URLs from non-file:// URLs.

If a browser user clicks a file:// link on an https-delivered webpage or PDF, nothing visibly happens. If you open the Developer Tools console on the webpage, you’ll see an error: “Not allowed to load local resource: file://host/page”.

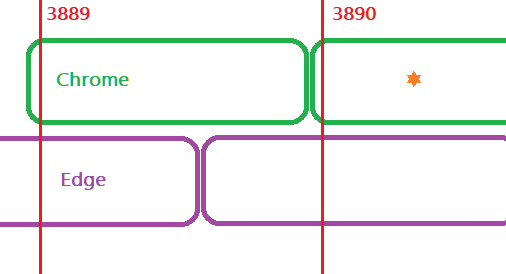

In contrast, Edge 18 (like Internet Explorer before it) allowed pages in your Intranet Zone to navigate to file:// URLs; only pages in the Internet Zone were blocked from such navigations.¹

Chrome offers no option to disable this navigation blocking, nor did Edge versions 76 through 94; (UPDATE) Edge 95+ added the IntranetFileLinksEnabled Group Policy.

What’s the Risk?

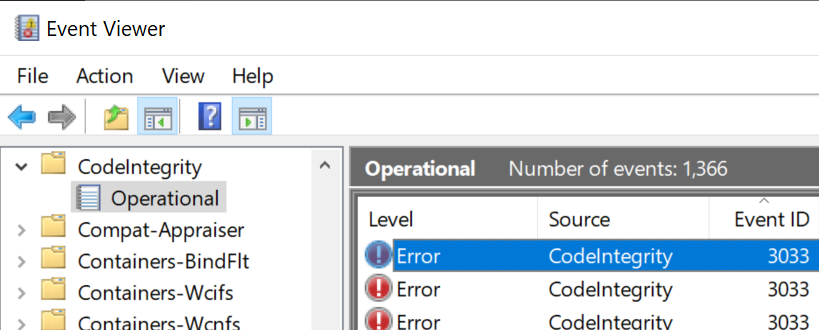

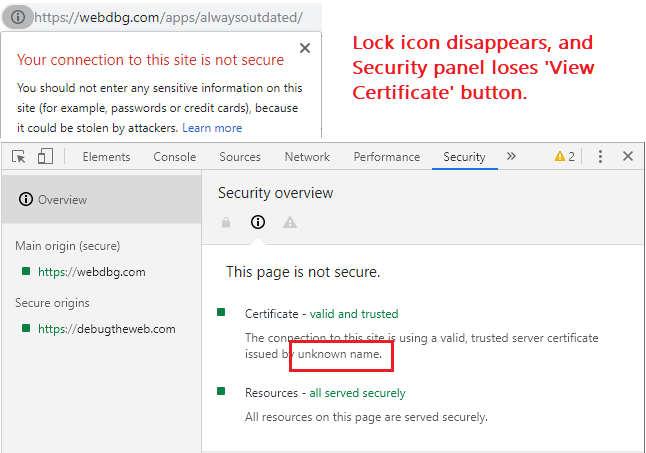

The most obvious problem is that the way Windows retrieves content for file:// URLs can result in privacy and security problems. Windows attempts Single Sign-On (SSO) when it fetches a remote file:// resource over SMB, the protocol it uses to retrieve files from a remote host. That fetch can leak your user account information (username) and a hash derived from your password to the remote site. This is a long-understood threat, with public discussions in the security community dating back to 1997.

What makes this extra horrific is that if you log into Windows using a Microsoft Account (MSA), the bad guy gets both your global user info AND a hash he can try to crack¹. Crucially, unlike HTTP/HTTPS SSO, SMB SSO is not restricted by Windows Security Zone:

An organization that wishes to mitigate this attack can drop SMB traffic at the network perimeter (gateway/firewall), disable NTLM or SMB, or use a new feature in Windows 11 to prevent the leak.

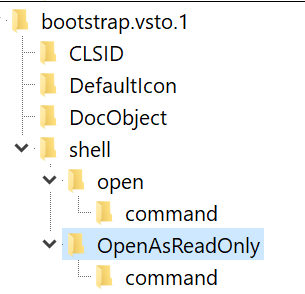

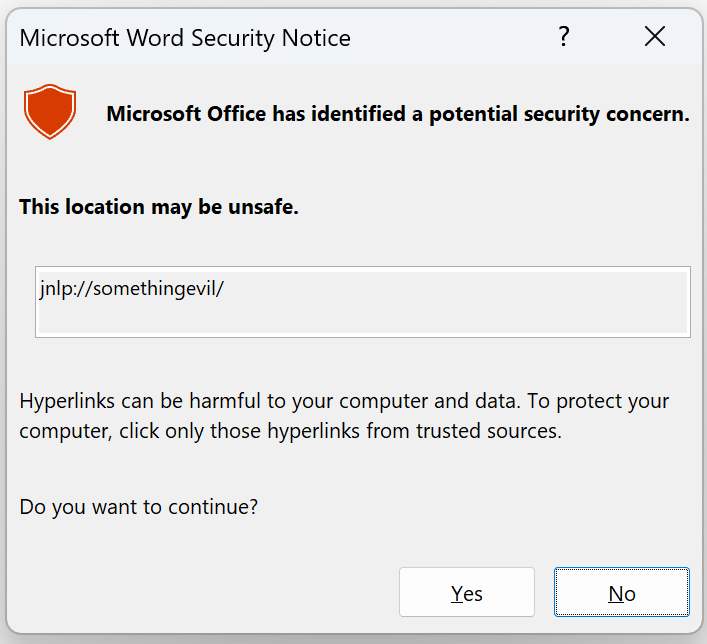

Beyond the data leakage risks related to remote file retrieval, other vulnerabilities related to opening local files also exist. Navigating to a local file might result in that file opening in a handler application in a dangerous or unexpected way. The Same Origin Policy for file URLs is poorly defined and inconsistent across browsers (see below), which can result in security problems.

Workaround: IE Mode

Enterprise administrators can configure sites that must navigate to file:// URLs to open in IE mode. Like legacy IE itself, IE mode pages in the Intranet zone can navigate to file URLs.

Workaround: Extensions

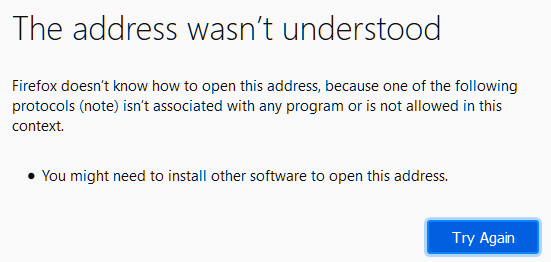

Unfortunately, the extension API chrome.webNavigation.onBeforeNavigate does not fire for file:// links that are blocked in HTTP/HTTPS pages, which makes working around this blocking via an extension difficult.

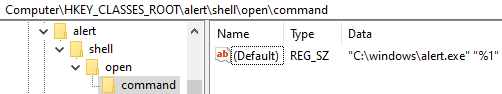

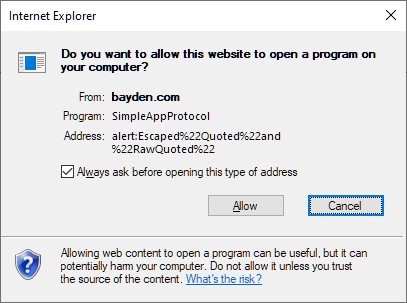

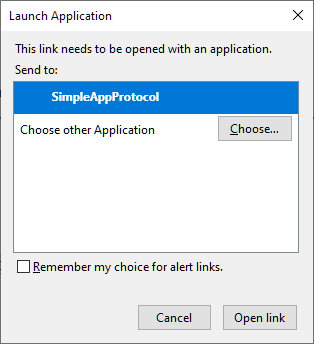

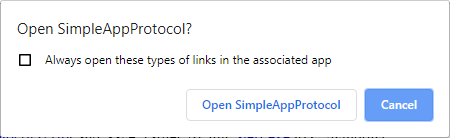

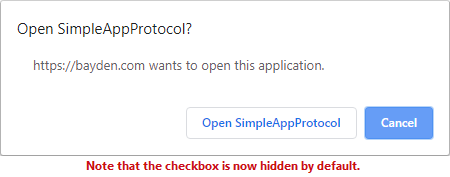

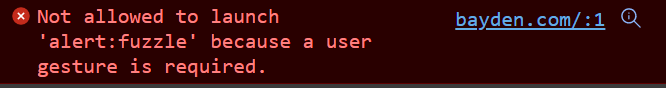

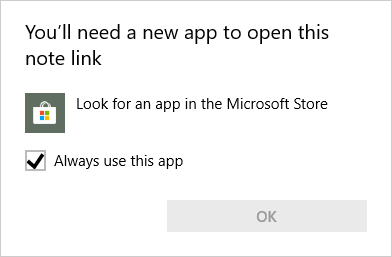

One could write an extension that uses a Content Script to rewrite all file:// hyperlinks to an Application Protocol handler (e.g. file-after-prompt://). That handler would launch a confirmation dialog before opening the target URL via ShellExecute or explorer /select,"file://whatever". However, this would require the extension to run on every page, which has non-zero performance implications. It also wouldn’t fix up any non-link file navigations (e.g. JavaScript that sets window.location.href="file://whatever").
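The link-rewriting step of such a hypothetical extension is simple. A real content script would be JavaScript that walks the page's anchors. The Python function below shows only the rewrite rule itself, using the illustrative file-after-prompt scheme. Registering the native protocol handler and showing the prompt are not covered. The names `rewrite_file_link` and `rewrite_links` are hypothetical.

```python
# Hypothetical application-protocol scheme; a native handler registered for
# it would show the confirmation prompt before opening the real target.
PROMPT_SCHEME = "file-after-prompt"


def rewrite_file_link(href: str) -> str:
    """Swap a file: scheme for PROMPT_SCHEME; leave any other URL unchanged."""
    if href[:5].lower() != "file:":
        return href
    # Keep everything after "file:" verbatim so the handler sees the original target.
    return PROMPT_SCHEME + ":" + href[5:]


def rewrite_links(hrefs: list[str]) -> list[str]:
    """Apply the rewrite to every href, as a content script would for each <a>."""
    return [rewrite_file_link(h) for h in hrefs]
```

Because non-file URLs pass through untouched, the rule is safe to apply to every link on every page. As noted above, though, it does nothing for script-driven navigations.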

Similarly, the Enable Local File Links extension simply adds a click event listener to every page loaded in the browser. If the listener observes the user clicking on a link to a file:// URL, it cancels the click and directs the extension’s background page to perform the navigation to the target URL, bypassing the security restriction by using the extension’s (privileged) ability to navigate to file URLs. But this extension will not help if the page attempts to use JavaScript to navigate to a file URI, and it exposes you to the security risks described above.

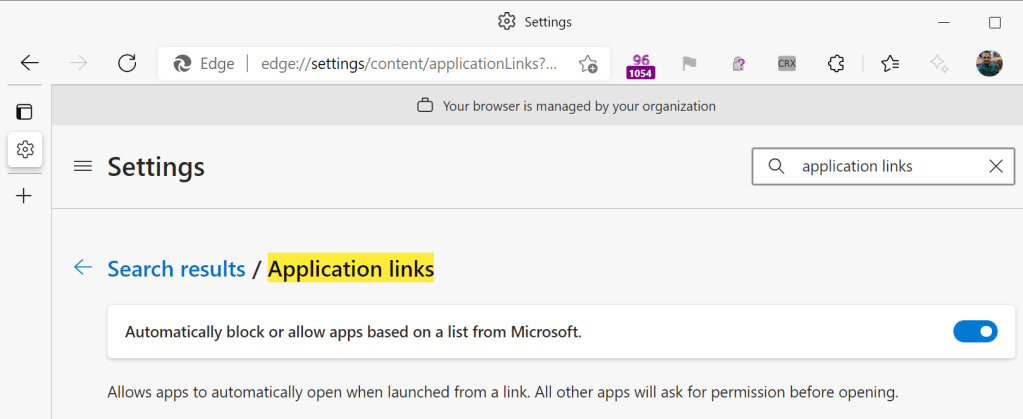

Workaround: Edge 95+ Group Policy

A Group Policy (IntranetFileLinksEnabled) was added to Edge 95+ that permits HTTPS-served sites on your Intranet to open Windows Explorer to file:// shares located in your Intranet Zone. This policy doesn’t accommodate every scenario, but it lets you configure Edge to behave more like Internet Explorer did.
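To make the combined rule concrete, here is a minimal Python model of the decision described in this post:

- file:// targets are blocked from non-file pages.
- With IntranetFileLinksEnabled, an HTTPS Intranet page may hand an Intranet file:// target to Windows Explorer.

The names (`file_navigation_outcome`, `Outcome`, `zone_of`) are hypothetical. The zone lookup is supplied by the caller rather than by Windows' real zone mapping. This is a sketch, not Edge's source; the actual policy may have conditions not captured here.

```python
from enum import Enum
from typing import Callable
from urllib.parse import urlsplit


class Zone(Enum):
    """Windows URL Security Zone IDs."""
    LOCAL_MACHINE = 0
    INTRANET = 1
    TRUSTED = 2
    INTERNET = 3
    RESTRICTED = 4


class Outcome(Enum):
    ALLOW = "allow"                # navigation is not subject to this restriction
    OPEN_IN_EXPLORER = "explorer"  # policy path: target is handed to Windows Explorer
    BLOCK = "block"                # "Not allowed to load local resource"


def file_navigation_outcome(initiator: str, target: str,
                            zone_of: Callable[[str], Zone],
                            intranet_file_links_enabled: bool = False) -> Outcome:
    """Model how a navigation from `initiator` to `target` is treated."""
    src = urlsplit(initiator).scheme
    dst = urlsplit(target).scheme
    if dst != "file":
        return Outcome.ALLOW       # only file:// targets are restricted
    if src == "file":
        return Outcome.ALLOW       # file-to-file navigation is not blocked by this rule
    if (intranet_file_links_enabled
            and src == "https"
            and zone_of(initiator) is Zone.INTRANET
            and zone_of(target) is Zone.INTRANET):
        return Outcome.OPEN_IN_EXPLORER
    return Outcome.BLOCK           # every other non-file page is blocked
```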

Necessary but not sufficient

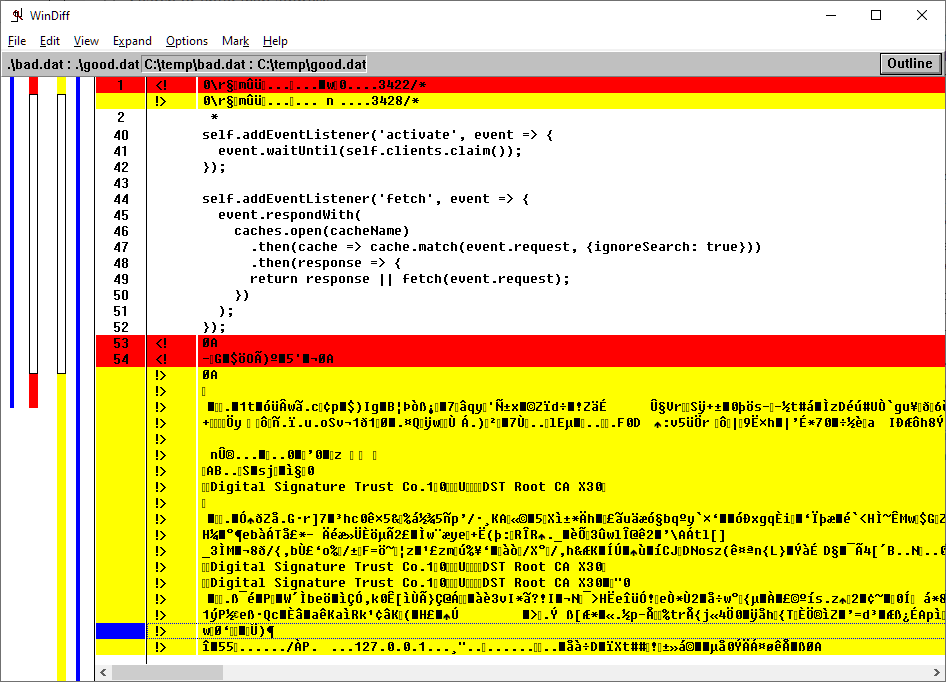

Blocking file:// URLs in the browser is a solid security restriction, but unfortunately it’s not complete. Myriad formats can hit the network for file URIs, and most of them will do so without confirmation. These range from Office documents, to emails, to media files, to PDF, MHT, and SCF files. Raymond Chen explores this in Subtle ways your program can be Internet-facing.

In an enterprise, the expectation is that the organization will block outbound SMB at the firewall. When I was working for Chrome and reported this issue to Google’s internal security department, they assured me that this is what they did. I then proved that they were not, in fact, correctly blocking outbound SMB for all employees, and they spun up a response team to go fix their broken firewall rules. In a home environment, the user’s router may or may not block the outbound request.
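One way to check whether your own network actually drops outbound SMB is to try a TCP connection to port 445 on an external host that you control and know to be listening. The Python sketch below does only that. The function name and the host in the usage line are hypothetical placeholders. A failed connection is only weak evidence of blocking, since the remote side might simply not be listening.

```python
import socket

SMB_PORT = 445  # SMB over TCP; legacy NetBIOS sessions use port 139


def smb_egress_open(host: str, port: int = SMB_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port completes.

    True against a host outside your network suggests outbound SMB is not
    being dropped at the perimeter. False (refused, reset, or timed out) is
    consistent with blocking, but the remote host might just not be listening.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # includes ConnectionRefusedError and socket timeouts
        return False
```

Usage: run `smb_egress_open("smb-test.example.net")` from a client machine, where the host name stands in for a server you operate. It is worth repeating the check from every network segment, since a single misconfigured rule is enough to leak.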

Various policies are available to better protect credential hashes, but I get the sense that they’re not broadly used.

Navigation Restrictions Aren’t All…

Subresources

This post mostly covers navigating to file:// URLs, but another question which occasionally comes up is “how can I embed a subresource like an image or a script from a file:// URL into my HTTPS-served page.” This, you also cannot do, for security and privacy reasons (we don’t want a web page to be able to detect what files are on your local disk). Blocking file:// embeddings in HTTPS pages can even improve performance.

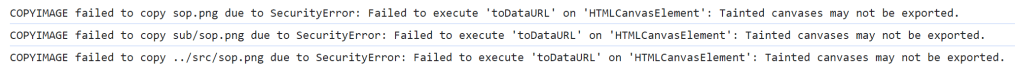

Chromium and Firefox allow HTML pages served from file:// URIs to load (execute) images, regular (non-Worker) scripts, and frames from any file path. However, modern browsers treat file origins as unique. This blocks simple reads of other files via fetch(), DOM interactions between frames containing different local files, and getImageData() calls on canvases with images loaded from other local files.
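Chromium and Firefox assign unique (opaque) origins to file: documents, unlike the tuple origins used for http and https. The difference can be modeled in a few lines of Python. This is a simplified sketch of the HTML Standard's origin concept, not browser code. `origin_of` and `same_origin` are hypothetical names, and the model ignores the command-line flags and preferences that relax the rule.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


class OpaqueOrigin:
    """A unique origin. Inherits identity-based __eq__ from object, so it
    compares equal only to itself; it serializes as "null"."""

    def __repr__(self) -> str:
        return "null"


def origin_of(url: str):
    """Return a (scheme, host, port) tuple, or a fresh OpaqueOrigin for file: URLs."""
    parts = urlsplit(url)
    if parts.scheme == "file":
        # In this model every file: document gets its own unique origin,
        # regardless of folder, so even sibling files are cross-origin.
        return OpaqueOrigin()
    return (parts.scheme, parts.hostname, parts.port or DEFAULT_PORTS.get(parts.scheme))


def same_origin(a, b) -> bool:
    # Tuples compare by value; an OpaqueOrigin equals only the same object.
    return a == b
```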

Legacy Edge (v18) and Internet Explorer are the only browsers that consider all local-PC file:// URIs to be same-origin, allowing such pages to refer to other HTML resources on the local computer. (This laxity is one reason that IE has a draconian “local machine zone lockdown” behavior that forbids running script in the Local machine zone). Internet Explorer’s “SecurityID” for file URLs is made up of three components (file:servername/sharename:ZONEID).
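For contrast, the IE-style identifier described above can be sketched as a string built from the server, share, and zone. The function name and exact string layout are illustrative assumptions that follow the (file:servername/sharename:ZONEID) shape given here. Treating local paths as having an empty server and share is also an assumption. It was chosen so that all local-PC files collapse into a single ID, matching the same-origin behavior described above.

```python
from urllib.parse import unquote, urlsplit


def ie_style_security_id(file_url: str, zone_id: int) -> str:
    """Illustrative (file:servername/sharename:ZONEID) identifier for a file URL."""
    parts = urlsplit(file_url)
    server = parts.hostname or ""  # local paths such as file:///C:/... have no server
    segments = [s for s in unquote(parts.path).split("/") if s]
    # Assumption: only UNC-style URLs have a share; local files get an empty one,
    # so every local-PC file maps to the same ID (i.e., is "same-origin").
    share = segments[0] if server and segments else ""
    return f"file:{server}/{share}:{zone_id}"
```

Under this scheme, two documents on the same share in the same zone share an identity no matter which folders they live in. That is the laxity that makes IE's local-machine lockdown necessary.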

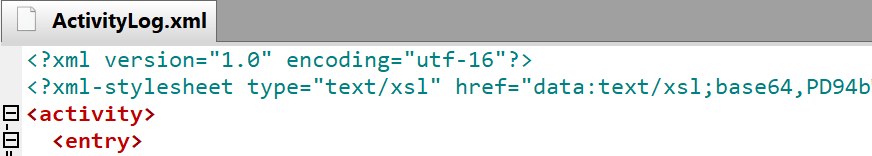

In contrast, Chromium’s Same-Origin Policy treats file: URLs as unique origins. This means that if you open an XML file from a file: URL, any XSL referenced by the XML is not permitted to load, and the page usually appears blank. The only clue is a Console message ('file:' URLs are treated as unique security origins.) noting the source of the problem.

This behavior affects scenarios such as opening Visual Studio Activity Log XML files. To work around the limitation, you can either embed your XSL in the XML file as a data URL:
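The processing instruction for this approach can be generated with Python's base64 module. This is a minimal sketch: the function name and the `text/xsl` MIME type are assumptions, so verify the result in the browser you target.

```python
import base64


def xml_stylesheet_pi(xsl_text: str) -> str:
    """Return an <?xml-stylesheet?> instruction that carries the XSL inline
    as a base64 data: URL, so no separate file: URL must be loaded."""
    payload = base64.b64encode(xsl_text.encode("utf-8")).decode("ascii")
    return f'<?xml-stylesheet type="text/xsl" href="data:text/xsl;base64,{payload}"?>'
```

To use it, replace the XML file's existing `<?xml-stylesheet ...?>` line (the one pointing at the external .xsl file) with the returned string.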

…or you can transform the XML to HTML by applying the XSLT outside of the browser first.

…or you can launch Microsoft Edge or Chrome using a command line argument that allows such access:

msedge.exe --allow-file-access-from-files

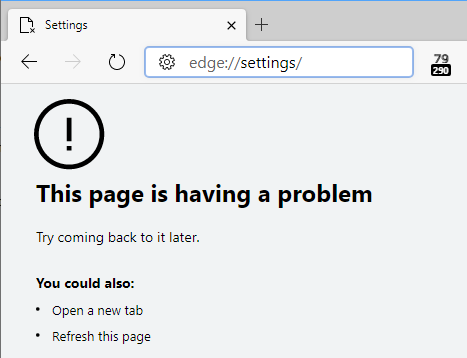

Note: For this command-line flag (and most others) to take effect, all Edge instances must be closed; check Task Manager. Edge’s “Startup Boost” feature means that there’s often a hidden msedge.exe instance hanging around in the background. You can visit edge://version in a running Edge window to see what command-line arguments are in effect for that window.

However, note that this flag doesn’t remove all restrictions on file: URLs, and it applies only to pages served from file origins.

Test Case

Consider a page loaded from a file on disk that contains three IFRAMEs pointing to text files (one in the same folder, one in a subfolder, and one in a different folder altogether). It also makes three fetch() requests and three XMLHttpRequests for the same URLs. In Chromium and Firefox, all three IFRAMEs load.

However, all of the fetch and XHR calls are blocked in both browsers:

If we then relaunch Chromium with the --allow-file-access-from-files argument, we see that the XHRs now succeed but the fetch() calls all continue to fail*.

*UPDATE: This changed in Chromium v99.0.4798.0 as a side-effect of a change to support an extensions scenario. The fetch() call is now allowed when the command-line argument is passed.

With this flag set, you can use XHR and fetch to open files in the same folder, parent folder, and child folders, but not from a file:// URL with a different hostname.

In Firefox, setting privacy.file_unique_origin to false allows both fetch and XHR to succeed for the text file in the same folder and a subfolder, but the text file in the unrelated folder remains forbidden.
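A test case like the one above can be reproduced with a small generator script. The Python sketch below lays out a hypothetical folder structure: page/, page/sub/, and a sibling elsewhere/ folder. It writes the three text files, then writes a test.html containing three IFRAMEs plus a fetch() and an XMLHttpRequest for each URL. Each request logs its outcome into the page. The file and folder names are assumptions, not those of the original test page.

```python
import json
from pathlib import Path

# Hypothetical layout: page/ holds the test page, page/sub/ is a subfolder,
# and elsewhere/ is an unrelated sibling folder.
TARGETS = ["same.txt", "sub/sub.txt", "../elsewhere/other.txt"]

# Doubled braces are literal JavaScript braces under str.format().
PAGE = """<!doctype html>
<meta charset="utf-8">
<title>file: access test</title>
{iframes}
<pre id="log"></pre>
<script>
const log = (msg) => document.getElementById("log").textContent += msg + "\\n";
for (const url of {targets_json}) {{
  fetch(url).then(r => r.text()).then(
    t => log("fetch OK   " + url + ": " + t.trim()),
    e => log("fetch FAIL " + url + ": " + e));
  const xhr = new XMLHttpRequest();
  xhr.onload = () => log("XHR OK     " + url + ": " + xhr.responseText.trim());
  xhr.onerror = () => log("XHR FAIL   " + url);
  try {{ xhr.open("GET", url); xhr.send(); }}
  catch (e) {{ log("XHR FAIL   " + url + ": " + e); }}
}}
</script>
"""


def build_test_case(root: Path) -> Path:
    """Create the folders, text files, and test.html under root; return the page path."""
    page_dir = root / "page"
    (page_dir / "sub").mkdir(parents=True, exist_ok=True)
    (root / "elsewhere").mkdir(parents=True, exist_ok=True)
    (page_dir / "same.txt").write_text("same folder", encoding="utf-8")
    (page_dir / "sub" / "sub.txt").write_text("subfolder", encoding="utf-8")
    (root / "elsewhere" / "other.txt").write_text("unrelated folder", encoding="utf-8")
    iframes = "\n".join(f'<iframe src="{t}"></iframe>' for t in TARGETS)
    html = PAGE.format(iframes=iframes, targets_json=json.dumps(TARGETS))
    page = page_dir / "test.html"
    page.write_text(html, encoding="utf-8")
    return page
```

To run the test:

1. Open page/test.html from disk and note which requests fail.
2. Close every browser instance.
3. Relaunch with --allow-file-access-from-files (or flip the Firefox preference) and compare.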

Extensions

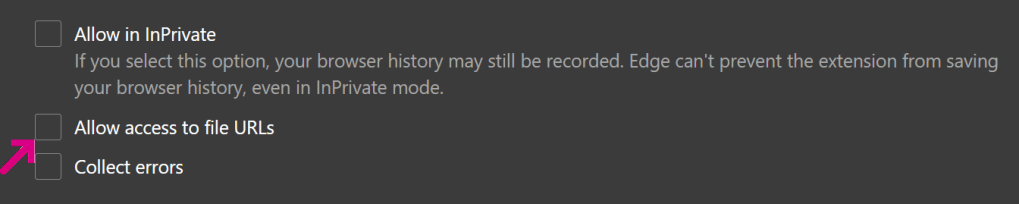

By default, extensions are forbidden to run on file:// URIs. To allow the user to run extensions on file:-sourced content, the user must manually enable the option in the Extension Options page:

When this permission is not present, you’ll see a console message like:

extension error injecting script :

Cannot access contents of url "file:///C:/file.html". Extension manifest must request permission to access this host.

Historically, any extension was allowed to navigate a tab to a file:/// url, but this was changed in late 2023 to require that the extension’s user tick the Allow access to file URLs checkbox within the extension’s settings.

Bugs

While Chrome attempts to include a file:// URL’s path in its Same Origin Policy computation, the path is currently ignored for localStorage calls; this is one of the oldest active security bugs in Chromium. It means that any HTML file loaded from a file:/// URL can read or overwrite the localStorage data of any other file:///-loaded page, even one loaded from a different host.
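A toy model shows the bug's effect: if the storage key computed for file: URLs ignores both path and host, every file: page lands in the same bucket. The class, function, and key strings below are hypothetical illustrations of the behavior described, not Chromium's storage implementation.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def storage_key(url: str) -> str:
    """Hypothetical localStorage bucket key for a page URL."""
    parts = urlsplit(url)
    if parts.scheme == "file":
        # The bug described above: host and path are ignored, so every
        # file: page maps to the same bucket.
        return "file://"
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    return f"{parts.scheme}://{parts.hostname}:{port}"


class LocalStorageModel:
    """Toy stand-in for the browser's per-origin localStorage buckets."""

    def __init__(self) -> None:
        self._buckets: dict[str, dict[str, str]] = {}

    def for_page(self, url: str) -> dict[str, str]:
        return self._buckets.setdefault(storage_key(url), {})
```

In this model, a value written by a page on the local disk is readable by a page served from a remote share, which is exactly the cross-file leak the bug permits.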

-Eric

¹ Interestingly, some alarmist researchers didn’t realize that file access was allowed only on a per-zone basis, and asserted that IE/Edge would directly leak your credentials from any Internet web page. This is not correct. The claim is even more wrong for old Edge (Spartan). There, Internet-zone web pages run in Internet AppContainers, which lack the Enterprise Authentication capability, so those processes don’t even have your credentials.

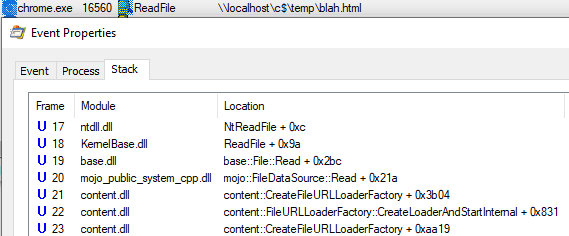

Under the hood

Under the hood, Chromium’s file protocol implementation is pretty simple. The FileURLLoader handles the file scheme by converting the file URI into a system file path, then uses Chromium’s base::File wrapper to open and read the file using the OS APIs (in the case of Windows, CreateFileW and ReadFile).
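The path-conversion step can be sketched in Python. This mirrors the behavior described (file URI to system path, then an ordinary open and read) and is not Chromium's C++ code. The function names are hypothetical, and the sketch handles only the common Windows forms:

- drive-letter paths,
- localhost URLs,
- server/share URLs, which become UNC paths that Windows fetches over SMB, as discussed earlier.

Python's own urllib.request.url2pathname performs a similar conversion, but its behavior depends on the platform it runs on.

```python
from urllib.parse import unquote, urlsplit


def file_url_to_windows_path(url: str) -> str:
    """Convert a file: URL to a Windows path (drive-letter or UNC)."""
    parts = urlsplit(url)
    if parts.scheme != "file":
        raise ValueError(f"not a file URL: {url}")
    path = unquote(parts.path).replace("/", "\\")
    host = parts.netloc
    if host and host.lower() != "localhost":
        # file://server/share/doc.txt -> \\server\share\doc.txt, a UNC path
        # that Windows retrieves over SMB.
        return "\\\\" + host + path
    # file:///C:/dir/x.txt -> C:\dir\x.txt: drop the slash before the drive letter.
    if len(path) >= 3 and path[0] == "\\" and path[2] == ":":
        return path[1:]
    return path


def load(url: str) -> bytes:
    """Open the converted path and read it as raw bytes via the OS file APIs."""
    with open(file_url_to_windows_path(url), "rb") as f:
        return f.read()
```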